Code Signing Compromise Installs Backdoors on Thousands of ASUS Computers

Attackers compromised an update server and signed their malware before pushing it to thousands of devices.

For most organizations, right after failed audits/compliance penalties, a compromised code signing certificate is the biggest threat posed by digital certificates and their management.

That’s because code signing serves such a vital function. A verified digital signature is tantamount to instant trust. And when managed and secured responsibly, that facilitates downloads, pushes software updates and helps everything continue to run smoothly throughout all stages of a product’s life-cycle.

When it’s managed poorly, and specifically when key security is bungled, customers end up with malware pushed onto their computers, which is what happened to Taiwanese tech company ASUS last year.

While this is far from the first time this kind of incident has occurred, it does serve as an excellent reminder of the importance of proper key/certificate management, as well as the perils of poor private key security.

So, today we’re going to talk about that and we’ll touch base with a subject matter expert from Sectigo on what could have been done better.

Let’s hash it out.

The Russians are just better at naming cyber attacks

Before we get into the actual technical side of what’s happened, I’d once again like to hit a common refrain – something I lament all the time – and that’s that the rest of us need to step up our naming conventions if we want to compete with the Russians.

Kaspersky, everyone’s favorite Moscow-based cybersecurity firm, and alleged sometimes-partner to the Russian GRU, was the first to report on the ASUS compromise. It has a paper and a presentation slated for a security conference in Singapore next month, and it has dubbed this fiasco, “Operation ShadowHammer.”

“In January 2019, we discovered a sophisticated supply chain attack involving the ASUS Live Update Utility. The attack took place between June and November 2018 and according to our telemetry, it affected a large number of users.”

We’re going to get into the specifics of the attack momentarily, but Operation ShadowHammer sounds like the working title of a James Bond film. A couple of years ago, when Russia attacked Ukraine’s power grid, the attack was dubbed “Crash Override,” which is the pseudonym used by the main character in the wonderfully campy ’90s movie Hackers.

Those are names that can put a little sweat on that brow, as opposed to names like EternalBlue, which sounds like an off-brand men’s fragrance, or Stuxnet – a potential name for a local public access channel. It’s trivial, but let’s not pretend like you wouldn’t perk up a little more at the mention of “Operation ShadowHammer” than “WannaCry.”

Explain Operation ShadowHammer to me like I’m five

Let’s start at the top with code signing, and what specifically went wrong here for ASUS – then we can start picking at what it all means. Code signing is the practice of affixing a digital signature to a script or executable – a piece of code – which works as an identifier and a monitor for end users.

Let’s say you want to publish a piece of software. You’ve finished designing and coding it, and all that’s left is to distribute it on the internet. But there’s a problem. This is the internet, where nobody trusts anybody. In a perfect world, end users would have no problem trusting in your good nature and downloading your software. But again, this is definitely NOT a perfect world. This is the internet.

That’s why we have code signing, which operates on the same principles as any PKI certificate. A trusted certificate authority vets the organization or individual before issuing them a code signing certificate that can be used to affix a unique digital signature to scripts and executables. When a piece of software is signed, the signature asserts the publisher’s identity and serves as a monitor, acting as a checksum in case the software is tampered with.

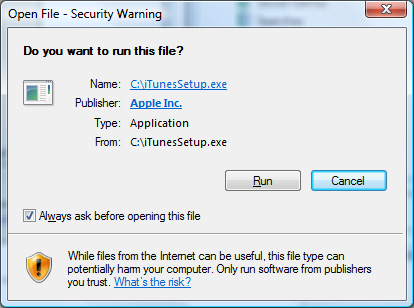

Here’s an example of how an end user’s system handles a signed piece of software.
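To make the “identity plus checksum” idea a bit more concrete, here is a minimal sketch of hash-then-sign and verify. It is a toy: it uses textbook RSA built from two well-known Mersenne primes so that it runs on the Python standard library alone, and the names `sign`, `verify` and `digest_as_int` are made up for this example. Real code signing relies on vetted libraries, proper padding, much stronger keys and a certificate chain, none of which are shown here.

```python
import hashlib

# Toy key material (an assumption for this sketch): two well-known Mersenne
# primes. Far too small and too structured for real use.
P = 2**107 - 1
Q = 2**127 - 1
N = P * Q                              # public modulus
E = 65537                              # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))      # private exponent, i.e. the "signing key"


def digest_as_int(payload: bytes) -> int:
    """Hash the payload and reduce it into the range the toy modulus can handle."""
    return int.from_bytes(hashlib.sha256(payload).digest(), "big") % N


def sign(payload: bytes, private_exponent: int = D) -> int:
    """Publisher side: only a holder of the private exponent can produce this value."""
    return pow(digest_as_int(payload), private_exponent, N)


def verify(payload: bytes, signature: int) -> bool:
    """End-user side: recover the signed hash with the public key and compare."""
    return pow(signature, E, N) == digest_as_int(payload)


update = b"legitimate update v1.0"
signature = sign(update)

print(verify(update, signature))                   # True: file is untouched
print(verify(update + b" + backdoor", signature))  # False: modified after signing
print(verify(b"malware", sign(b"malware")))        # True: signed with the real key
```

The last line is the uncomfortable part. Verification only proves that whoever signed the file held the private key. It says nothing about whether that person should have had it.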

Now, ASUS was using its code signing certificate a little bit differently: it was using it to sign software updates. This is another use case for code signing. Signing software updates with a trusted key safeguards users from downloading malware that’s masquerading as a legitimate update.

Unless the update is signed by ASUS, it isn’t trusted by the ASUS device (unless the user manually decides to accept it – at which point all bets are off).

With that cursory explanation of Code Signing out of the way, let’s get down to what happened. ASUS had one of its update servers compromised, and residing on said server were at least two of ASUS’ signing keys. If an attacker can get access to those keys, it can sign malware and distribute it through ASUS’ update channels as a legitimate update.

This was a very targeted campaign, as was first reported by Motherboard. The attackers had a list of 600 MAC addresses they were attempting to install backdoors on, all hashed in an attempt to obfuscate their intentions.

Any time the malware infected a machine, it collected the MAC address from that machine’s network card, hashed it, and compared that hash against the ones hard-coded in the malware. If it found a match to any of the 600 targeted addresses, the malware reached out to asushotfix.com, a site masquerading as a legitimate ASUS site, to fetch a second-stage backdoor that it downloaded to that system. Because only a small number of machines contacted the command-and-control server, this helped the malware stay under the radar.
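The matching step described above is simple enough to sketch, and the same logic is what a defender would use to check whether a given machine was on the target list. This is only an illustration under assumptions: the hash algorithm (MD5 here), the choice to hash the six raw bytes of the address, and the function names are all hypothetical, and the example address is invented.

```python
import hashlib
from typing import Set


def normalize_mac(mac: str) -> bytes:
    """Turn '00:1A:2B:3C:4D:5E' or '00-1a-2b-3c-4d-5e' into 6 raw bytes."""
    raw = bytes.fromhex(mac.replace(":", "").replace("-", ""))
    if len(raw) != 6:
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    return raw


def mac_fingerprint(mac: str) -> str:
    """Hash the address. MD5 is an assumption made for this sketch."""
    return hashlib.md5(normalize_mac(mac)).hexdigest()


def is_targeted(mac: str, target_hashes: Set[str]) -> bool:
    """Compare the machine's hashed MAC against the hard-coded list of hashes."""
    return mac_fingerprint(mac) in target_hashes


# Hypothetical target list: only hashes are stored, never the addresses themselves.
TARGET_HASHES = {mac_fingerprint("00:1A:2B:3C:4D:5E")}

print(is_targeted("00-1a-2b-3c-4d-5e", TARGET_HASHES))  # True
print(is_targeted("00:1A:2B:3C:4D:5F", TARGET_HASHES))  # False
```

Storing hashes instead of addresses means that someone who pulls the list out of the binary doesn’t immediately learn who the targets are.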

What makes this worse is the fact that there were actually two code signing key compromises involved in this incident. The first certificate expired in the middle of 2018. So, the attackers began using another valid one that had apparently been saved on the same compromised server.

That shouldn’t happen.

How could this code signing compromise have been stopped?

There are a few ways ASUS could have avoided this situation. The first would have been with an on-demand code signing arrangement, one in which it wasn’t charged with physical security. We caught up with Sectigo’s Tim Callan, who explained it thusly:

“[Our offering,] Code Signing on Demand, which is integrated with SCM (Sectigo Certificate Manager) provides a protection for this. There are 3 levels of it:

- “In the most secure deployment scenario, private keys are stored in our cloud in a HSM or an encrypted database. We follow strict security practices and our data center goes through regular audit and compliance check. We feel that it provides a better security than many enterprise environment.

- “The customer’s SCM admin defines the developer accounts who may have access to the code signing task. The developers themselves do not possess the private key.

- “When the developer signs a piece of code, by default, the admin have to approve or deny it. This provides another level of check for the validation of the signing operation.”
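The three controls Callan describes (the key stays inside the service, only named developers can request signatures, and an admin approves each request) can be sketched as a small gatekeeper class. This is not Sectigo’s API or implementation. `SigningService` and its methods are hypothetical, and an HMAC over the artifact hash stands in for the signing operation an HSM would actually perform.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass
from typing import Dict, Set


@dataclass
class SigningRequest:
    developer: str
    artifact_sha256: str
    approved: bool = False


class SigningService:
    """Hypothetical service: the key lives here and is never handed out."""

    def __init__(self, allowed_developers: Set[str]) -> None:
        self._key = secrets.token_bytes(32)   # stand-in for a key held in an HSM
        self._allowed = set(allowed_developers)
        self._requests: Dict[int, SigningRequest] = {}

    def submit(self, developer: str, artifact: bytes) -> int:
        """Developer side: ask for a signature, receive only a request ID."""
        if developer not in self._allowed:
            raise PermissionError(f"{developer} may not request signatures")
        request_id = len(self._requests) + 1
        digest = hashlib.sha256(artifact).hexdigest()
        self._requests[request_id] = SigningRequest(developer, digest)
        return request_id

    def approve(self, request_id: int) -> None:
        """Admin side: called after reviewing the request."""
        self._requests[request_id].approved = True

    def sign(self, request_id: int) -> str:
        """Sign only approved requests. HMAC is a placeholder for the real operation."""
        request = self._requests[request_id]
        if not request.approved:
            raise PermissionError("request has not been approved by an admin")
        message = bytes.fromhex(request.artifact_sha256)
        return hmac.new(self._key, message, hashlib.sha256).hexdigest()


service = SigningService({"alice"})
request_id = service.submit("alice", b"installer bytes")

try:
    service.sign(request_id)
except PermissionError as error:
    print("blocked:", error)   # blocked: request has not been approved by an admin

service.approve(request_id)
print(len(service.sign(request_id)))  # 64 hex characters
```

The point of the design is that breaking into a developer’s workstation, or a build server, yields a login to revoke rather than a private key to steal.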

A company the size of ASUS is unlikely to want to relinquish control over its keys and ability to sign unilaterally. It’s just too big for that. So while this solution would certainly be helpful for smaller software developers, it likely wouldn’t have been an option for ASUS.

So, it would come down to key security. Under optimal circumstances, signing keys should be stored on external physical hardware tokens. That wasn’t the case here:

“A HW token or storing the key in an HSM provides a better protection,” says Callan. “Some people do not use them for non-EV for cost concerns. In that case, it is password protected which is susceptible to compromise. Where possible, the private key file should be protected by a 2FA.”

So, that leaves one last question that’s germane to our interest in this compromise: what needs to be done to remediate?

Obviously, the compromised certificates will need to be revoked and replaced. There will also need to be an informational campaign to help get ahead of the news cycle and inform customers of any potential risks. Kaspersky managed to reverse the hashes for most of the MAC addresses, so it’s likely ASUS will need to contact those customers directly.
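Reversing a hash of a MAC address is less exotic than it sounds, because the search space is small: once the vendor prefix is known, only three bytes (about 16.7 million values) remain to be guessed. Below is a sketch of one plausible approach, a plain brute-force enumeration. It is not a description of how Kaspersky actually did it. MD5 over the raw bytes, the function name `recover_macs` and the example address are all assumptions, and the demo guesses only two bytes so that it finishes instantly.

```python
import hashlib
from typing import Dict, Iterable, Set


def recover_macs(target_hashes: Set[str], prefixes: Iterable[bytes],
                 unknown_bytes: int = 3) -> Dict[str, str]:
    """Try every address under each known prefix; return hash -> MAC for the hits."""
    found: Dict[str, str] = {}
    for prefix in prefixes:
        for suffix in range(256 ** unknown_bytes):
            candidate = prefix + suffix.to_bytes(unknown_bytes, "big")
            digest = hashlib.md5(candidate).hexdigest()   # MD5 is assumed
            if digest in target_hashes:
                found[digest] = ":".join(f"{byte:02x}" for byte in candidate)
    return found


# Demo with an invented address: pretend the first four bytes are known and
# only the last two (65,536 candidates) have to be guessed.
secret_mac = bytes.fromhex("001a2b3c4d5e")
targets = {hashlib.md5(secret_mac).hexdigest()}

recovered = recover_macs(targets, [bytes.fromhex("001a2b3c")], unknown_bytes=2)
print(list(recovered.values()))  # ['00:1a:2b:3c:4d:5e']
```

In other words, hashing the target list slows analysts down, but it does not keep the targets secret for long.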

Beyond that:

“The developer’s password must be reset or his/her account revoked/replaced,” says Callan. “They will also have to find how the compromise occurred and tighten the security around the causes.”

Obviously, there’s a lot more going on here than just a compromised signing key, but make no mistake – those keys were the biggest weapon in this attack.

The ability to pass off malware as a legitimate piece of software undermines the entire concept of code signing, which is why it’s so heavily guarded against.

And while a company like ASUS might be too big to offload its signing functions to a third-party company like Sectigo, that doesn’t mean it can afford to shirk on key security – or just good security, in general. If anything, the decision to handle things in-house warrants an even more stringent approach to security.

This isn’t ASUS’s first issue, either. And if it doesn’t learn from this it’s not likely to be its last.

As always, leave any comments or questions below…
