Bad Bots: What They Are and How to Fight Them

Bad internet bot traffic rose by 18.1% in 2019, and it now accounts for nearly one-quarter of all internet traffic

The figure above, which comes from Imperva’s 2020 Bad Bot Report, should serve as a warning to all internet users, and especially to companies and organizations that maintain their own online infrastructure, to take this problem seriously.

The pervasiveness of malicious bots (or “bad bots” for short) not only strains networks and drives up infrastructure costs; it also points to widespread cyberattacks and other malicious activity carried out by cybercriminals and threat groups.

Imperva’s report even reveals concerning trends such as attempts to rebrand bad bots as legitimate services, the growth of massive credential stuffing attacks being carried out by malicious actors using bots, and the increasing complexity of bot operations. Considering these developments, it’s only prudent to understand what bad bots are and how to fight them.

Let’s hash it out.

What Are Internet Bots and How Are They Used?

Simply put, internet bots are software applications that are designed to automate many tedious and mundane tasks online. They’ve become an integral part of what makes the internet tick and are used by many internet applications and tools.

For example, internet search engines like Google rely on bots that crawl through web content in order to index information. Bots go through millions of web pages’ text to find and index terms that these pages contain. So, when a user searches for a particular term, the search engine will know which pages contain that particular information.
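The indexing step described above can be sketched as a tiny inverted index, which maps each term to the pages that contain it. This is only an illustration under simplifying assumptions: the `build_index` and `search` helpers and the example URLs are hypothetical, and real search engines do far more (ranking, stemming, deduplication, and so on).

```python
import re
from collections import defaultdict
from typing import Dict, Set


def build_index(pages: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each lowercase term to the set of page URLs that contain it."""
    index = defaultdict(set)
    for url, text in pages.items():
        # Split the page text into simple alphanumeric terms.
        for term in re.findall(r"[a-z0-9]+", text.lower()):
            index[term].add(url)
    return dict(index)


def search(index: Dict[str, Set[str]], term: str) -> Set[str]:
    """Return the URLs of pages containing the term (case-insensitive)."""
    return index.get(term.lower(), set())


# Hypothetical pages a crawler might have fetched.
pages = {
    "https://example.com/flights": "Cheap flights to Lisbon",
    "https://example.com/hotels": "Hotels in Lisbon and Porto",
}
index = build_index(pages)
print(sorted(search(index, "Lisbon")))
# ['https://example.com/flights', 'https://example.com/hotels']
```

Because the index is built ahead of time, answering a query is a dictionary lookup rather than a scan of every page.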

Travel aggregators use bots to continuously check and gather information on flight details and hotel room availabilities so that they can display the most up-to-date information for users. This means that users no longer need to check different websites individually. The aggregators’ bots consolidate all of the information, allowing the service to display the data all at once.

Thanks to developments in artificial intelligence and machine learning, bots are also being used to complete more complex tasks. Business intelligence services use bots to crawl through product reviews and social media comments to provide insights on how a particular brand is perceived.

How Bots Can Positively (and Negatively) Impact Your Organization

Imagine if these tasks were done manually by a human. It would be quite a slow and error-prone process. By using bots, these tasks are completed quickly and more accurately. This frees up your organization’s “human assets” to collaborate and focus on higher-level projects and goals.

Bots also affect the infrastructure of the websites and applications they come into contact with. Since bots essentially “visit” websites, they consume computing resources such as server capacity and bandwidth. Because of this, even good bots can inadvertently cause harm: an aggressive search engine or aggregator bot can take down a site with limited resources. Fortunately, proper site configuration can prevent this from happening.

What Are Bad Bots?

In general, bot activity is already something that most organizations have been dealing with for years. However, what’s worrisome is the traffic that comes from the “bad bots” — the bots that have been appropriated by malicious actors to serve as tools for various hacking and fraud campaigns.

The most common uses for bad bots include:

- Web scraping: Hackers can steal web content by crawling websites and copying their entire contents. Fake or fraudulent sites can then use the stolen content to appear legitimate and trick visitors.

- Data harvesting: Aside from stealing entire websites’ content, bots are also used to harvest specific data such as personal, financial, and contact information that can be found online.

- Price scraping: Product prices can also be scraped from ecommerce websites so that companies can use them to undercut their competitors.

- Brute-force logins and credential stuffing: Malicious bots interact with pages containing log-in forms and attempt to gain access to sites by trying out large numbers of username and password combinations, either guessed (brute force) or taken from lists of credentials leaked in earlier breaches (credential stuffing).

- Digital ad fraud: Hackers can game pay-per-click (PPC) advertising systems by using bots to “click” on ads on a page. Unscrupulous site owners can earn from these fraudulent clicks.

- Spam: Bots can also automatically interact with forms and buttons on websites and social media pages to leave phony comments or false product reviews.

- Distributed denial-of-service attacks: Malicious bots can be used to overwhelm a network or server with immense amounts of traffic. Once the available resources are exhausted, sites and applications supported or hosted by the network become inaccessible to legitimate users.

Hackers are also becoming more sophisticated and creative in how they use these bots. To start, they’re designing bots that are capable of circumventing conventional bot mitigation solutions, thus making them harder to detect. Some enterprising parties even create seemingly legitimate services out of bad bots. Bots can be used to help buyers get ahead of queues in time-sensitive transactions such as buying limited edition products or event tickets.

Hackers can perform these activities on a massive scale through the use of botnets, which are networks of devices capable of running bots. Many of these devices were compromised in previous hacks. The Mirai botnet, which is responsible for several massive denial-of-service attacks, is composed of tens of thousands of compromised internet-of-things (IoT) devices such as IP cameras and routers.

To put it succinctly, industries are suffering from these bad bots. According to the same Imperva report, the hardest-hit sectors are financial services (47.7%), education (45.7%), IT and services (45.1%), and marketplaces (39.8%). In these industries, bots look to breach accounts through brute force, steal intellectual property, and scrape prices.

Malicious Bots: How Do We Fight Them?

Falling victim to bad bots can have serious consequences. Aside from the computing resources it consumes, bot traffic can affect business performance. Price scraping can leave businesses at a pricing disadvantage against their competitors. Content scraping can hurt search rankings. Spam can affect a site’s image and credibility in the eyes of search engines.

Getting breached can open up networks to other forms of cyberattack, including data theft and ransomware. Clearly, steps must be taken to prevent bad bots from running rampant.

Here are three critical measures to fight back against these bad bots:

1. Recognize the Problem

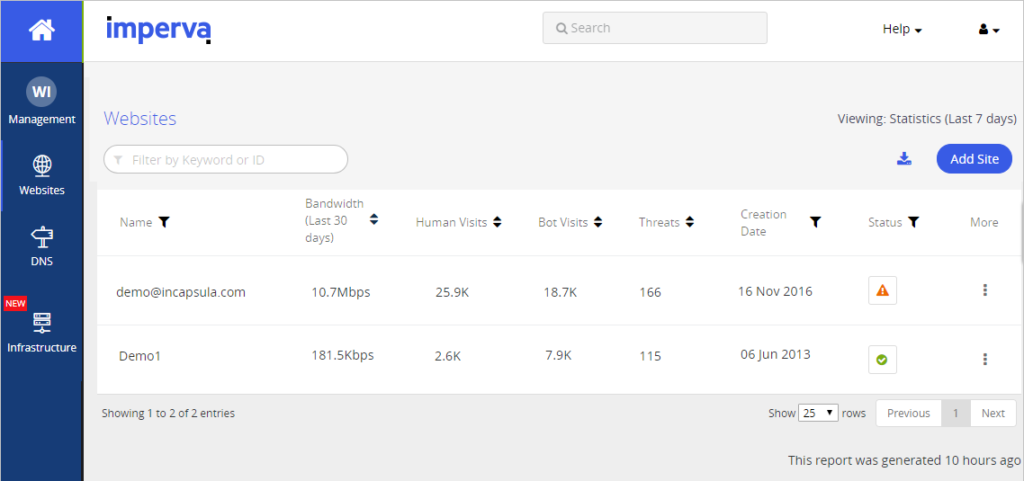

Organizations must be proactive in dealing with bad bots. This starts with recognizing and identifying the problem. IT teams can assess whether their networks are being attacked by bots by looking at their web analytics and reviewing their traffic.

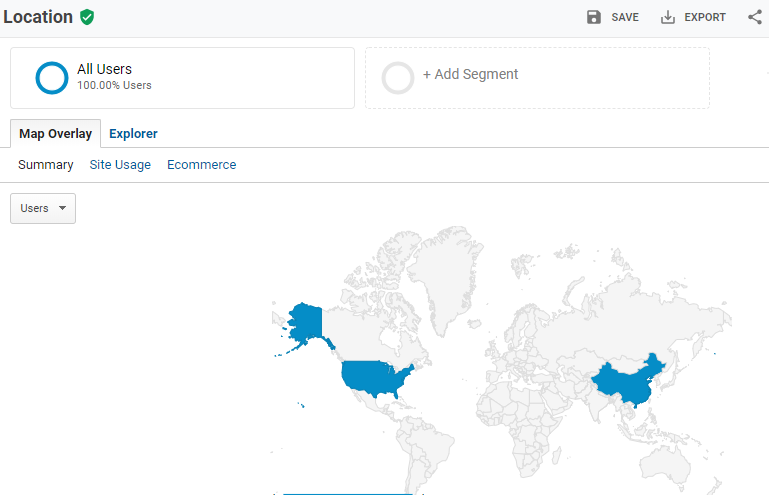

Spikes in bandwidth consumption and log-in attempts can be signs of increased bot activities. Traffic from unusual countries of origin can also hint at bad bots probing a site for vulnerabilities. Checking IP addresses and geolocations of traffic sources can reveal potential bot activity.
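As a rough illustration of spotting a spike in log-in attempts, the sketch below counts failed log-in requests per IP address and flags the addresses that exceed a threshold. Everything here is an assumption made for the example: the `Request` record, the `/login` path, the 401/403 status codes, and the threshold of five failures would all need to be adapted to a site's actual logs and normal traffic.

```python
from collections import Counter
from typing import Dict, Iterable, NamedTuple


class Request(NamedTuple):
    """Hypothetical, already-parsed access log entry."""
    ip: str
    path: str
    status: int


def suspicious_login_ips(
    requests: Iterable[Request],
    login_path: str = "/login",
    max_failures: int = 5,
) -> Dict[str, int]:
    """Return IPs with more than max_failures failed log-in requests."""
    failures = Counter(
        r.ip
        for r in requests
        if r.path == login_path and r.status in (401, 403)
    )
    return {ip: count for ip, count in failures.items() if count > max_failures}


# Sample data using documentation-only IP ranges.
sample = [Request("203.0.113.7", "/login", 401)] * 6 + [
    Request("198.51.100.2", "/login", 401),
    Request("198.51.100.2", "/login", 200),
    Request("203.0.113.7", "/products", 200),
]
print(suspicious_login_ips(sample))  # {'203.0.113.7': 6}
```

A flagged address is only a lead for further review. Shared networks and forgotten passwords can produce similar patterns, so the threshold should be tuned against normal traffic.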

Business performance can also be an indication of malicious bot activity. For instance, a sudden drop in conversion rates for ecommerce sites can point to price scraping.

2. Employ Defensive and Protective Measures

It’s critical for organizations to adopt and enhance cybersecurity measures that protect their respective infrastructure. Among the best practices to implement are:

- Using robots.txt. A robots.txt file placed in the root directory of a website can help keep bots such as search engine crawlers from overloading it with requests. The file tells bots which paths they may and may not crawl. However, robots.txt is purely advisory: it only works with legitimate crawlers that honor its directives, and it does nothing to keep bad bots out. Still, it can help prevent overly aggressive crawlers from taking sites down.

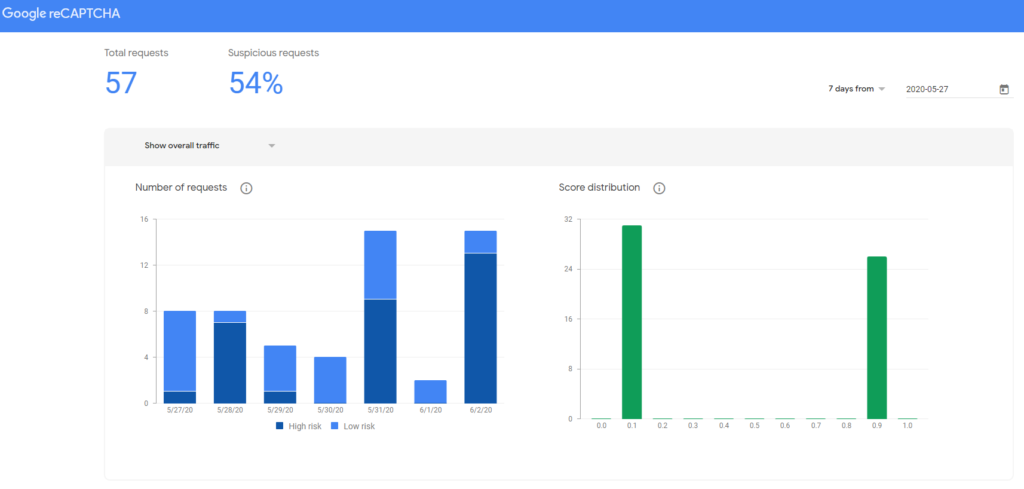

- Using challenges to distinguish between human users and bot traffic. Bots can be programmed to automatically fill out forms in order to post spam or to carry out credential stuffing against websites and web applications. Challenges that require human input or user validation, such as CAPTCHA, can help prevent bots from completing these attacks.

- Adopting network protection solutions. In most cases, it’s best for organizations to invest in more advanced forms of protection. Cloud application security solutions and cloud-based web application firewalls (WAFs) now employ advanced methods to stop bot traffic from even interacting with a site. These solutions are capable of identifying and blocking bots according to their behaviors, origins, and signatures. Some industry-leading solutions are even capable of preventing massive DDoS attacks from causing any downtime to sites under their protection.

- Deploying strict access controls. Multi-factor authentication requires users to provide additional credentials such as one-time passwords (OTPs), which deters bot attacks such as credential stuffing. Identity and access management (IAM) also allows administrators to strictly define which resources within their network each user account can access. This way, if a bot “cracks” the credentials of one account, its access to the network is still limited, which minimizes the potential damage.
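To make the robots.txt measure above more concrete, here is a minimal sketch of how a well-behaved crawler might interpret such a file, using Python’s standard `urllib.robotparser` module. The rules, the `ExampleBot` user agent, the `load_rules` helper, and the URLs are all made up for illustration. Note that `Crawl-delay` is a non-standard directive that only some crawlers honor.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep all bots out of /admin/ and ask them
# to wait 10 seconds between requests (Crawl-delay is non-standard).
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Crawl-delay: 10
"""


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content the way a compliant crawler would."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)
print(rules.can_fetch("ExampleBot", "https://example.com/admin/users"))  # False
print(rules.can_fetch("ExampleBot", "https://example.com/blog/"))        # True
print(rules.crawl_delay("ExampleBot"))                                   # 10
```

Nothing in this exchange is enforced by the server. A bad bot can simply skip the check, which is why robots.txt has to be combined with the other measures in this list.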

3. Monitor and Test Security

It’s important to constantly monitor and test the behavior of all security measures that are put in place. Misconfiguration and faulty implementation do happen. As such, checks like penetration tests and attack simulations should be performed routinely to verify that the measures work as intended. Adopting even the most expensive tools and solutions only leads to waste if they are improperly configured.

It’s also crucial to test whether the measures are having a negative effect on business goals. Poorly configured bot detection can prevent good bots from getting through. Blocking search engine crawlers can quickly tank a site’s ranking. If a site relies on partnerships with aggregators to drive its business, inadvertently blocking aggregator bots can likewise break the service altogether.
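One way to check that a request claiming to be a search engine crawler is genuine, rather than trusting its user-agent string, is a reverse DNS lookup followed by a forward lookup, which is the approach Google documents for verifying Googlebot. The sketch below is an assumption-laden illustration of that idea: the `is_verified_crawler` helper is hypothetical, the default resolvers handle IPv4 only, and the resolvers can be swapped for fakes so the logic can be tested without network access.

```python
import socket
from typing import Callable, List, Tuple

# Reverse-DNS suffixes Google documents for its crawlers.
TRUSTED_SUFFIXES = (".googlebot.com", ".google.com")


def _reverse(ip: str) -> str:
    """Default reverse lookup: IP address to hostname."""
    return socket.gethostbyaddr(ip)[0]


def _forward(hostname: str) -> List[str]:
    """Default forward lookup: hostname to IPv4 addresses."""
    return socket.gethostbyname_ex(hostname)[2]


def is_verified_crawler(
    ip: str,
    reverse_lookup: Callable[[str], str] = _reverse,
    forward_lookup: Callable[[str], List[str]] = _forward,
    trusted_suffixes: Tuple[str, ...] = TRUSTED_SUFFIXES,
) -> bool:
    """Return True if the IP's hostname is trusted and resolves back to it."""
    try:
        hostname = reverse_lookup(ip).lower().rstrip(".")
    except OSError:  # Lookup failed, so the claim cannot be verified.
        return False
    if not hostname.endswith(trusted_suffixes):
        return False
    try:
        # The forward lookup must return the original IP; otherwise the
        # reverse record could have been forged.
        return ip in forward_lookup(hostname)
    except OSError:
        return False
```

A check like this could be run against a sample of blocked requests during testing to see whether any legitimate crawlers are being turned away.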

Final Thoughts on Bad Bots

Site owners should pay close attention to their traffic considering how malicious bots continue to run rampant. Left unchecked, bad bot traffic can evolve from a nuisance to something more serious such as a full-on cyber attack in no time. Knowing how to mitigate bad bot traffic can help to safeguard your infrastructure and create a more secure internet for everyone.

Recent News Related to Bot Traffic

Updated on January 9, 2021
