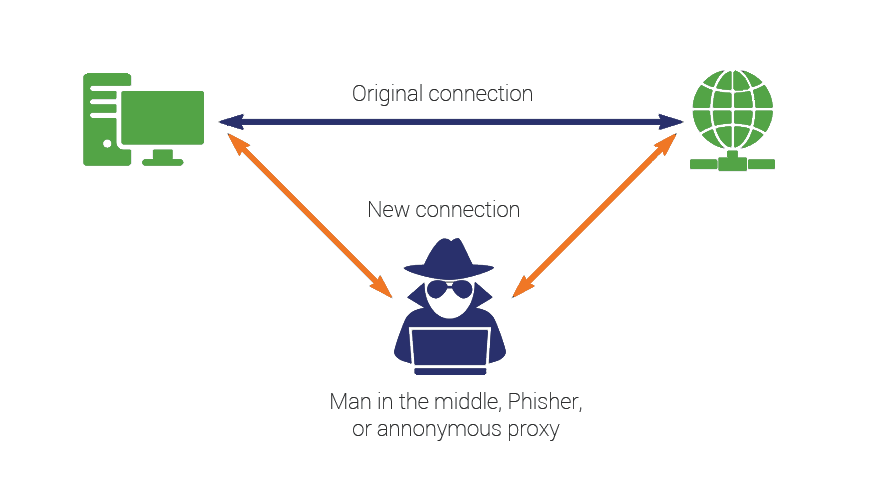

Protecting against Man-In-The-Middle Attacks

Make sure nobody gets in the middle of your connections

SSL/TLS forms the bedrock of modern web security by combining asymmetric and symmetric cryptography to achieve secrecy and authentication. This is important when sending sensitive information (credit cards, social security numbers, etc.) over an insecure channel such as the internet. However, improperly implemented SSL/TLS can lead to these secrets being exposed. The biggest class of threat SSL/TLS protects against is the “man-in-the-middle” (MITM) attack, in which a malicious actor intercepts communication and decrypts it (either now or at a later point).

SSL/TLS ensures that the party you are communicating with really is who it claims to be, and really does control the domain you’re visiting in global DNS. This matters because, if certificates were merely self-signed, an attacker who had positioned themselves between you and the provider could simply present a self-signed certificate of their own. The information would still be encrypted on the wire, but it would be securely delivered to the attacker!
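
To make the chain-of-trust point concrete, here is a minimal Python sketch using the standard-library `ssl` module. It assumes Python 3.7 or later and the operating system's default trust store; the function names are hypothetical. A context built with `ssl.create_default_context()` rejects a self-signed or untrusted certificate, or one issued for a different hostname, by raising `ssl.SSLCertVerificationError` during the handshake.

```python
import socket
import ssl


def make_verifying_client_context() -> ssl.SSLContext:
    """Client context that enforces both chain-of-trust and hostname checks."""
    # create_default_context() loads the system's trusted root CAs and
    # already enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Set explicitly anyway, to document the intent.
    ctx.verify_mode = ssl.CERT_REQUIRED
    ctx.check_hostname = True
    return ctx


def open_verified_connection(host: str, port: int = 443) -> ssl.SSLSocket:
    """Connect to host:port over TLS, verifying the server's certificate.

    Raises ssl.SSLCertVerificationError if the certificate is self-signed,
    chains to an untrusted root, or was issued for a different hostname."""
    ctx = make_verifying_client_context()
    raw = socket.create_connection((host, port), timeout=10)
    try:
        # server_hostname is used both for SNI and for the hostname check.
        return ctx.wrap_socket(raw, server_hostname=host)
    except Exception:
        raw.close()
        raise
```

The dangerous pattern to watch for in client code is the opposite: setting `check_hostname = False` and `verify_mode = ssl.CERT_NONE`, which makes the connection accept any certificate, including an attacker's.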

It’s important to keep in mind that there are many layers between the client and the server that an attacker could target. An attacker could run malware on the endpoint itself, sniff traffic on an adjacent layer 2 network segment and potentially strip SSL/TLS altogether, inject malicious entries into the target’s ARP cache, or even compromise the routing table of the upstream router that serves as the network’s gateway.

Once the traffic reaches the internet, a bad actor could tap fiber strung along telephone poles, participate in BGP and reroute traffic through nodes they control, or compromise name resolution by taking control of a DNS server between the client and its destination. All of these avenues of attack are considered MITM, and all of them can be mitigated by properly deploying and managing SSL/TLS.

Focusing on the Man-in-the-Middle

In 2017, it was discovered that many banking apps from popular banks with a global presence (including Bank of America and HSBC) were vulnerable to man-in-the-middle attacks because the software did not properly verify the chain of trust. Unknown to these banks’ customers, attackers with access to the network a client was banking on could have posed as the bank’s server and harvested balances, credentials, and even two-factor authentication tokens. Imagine having your life savings wiped out in a matter of hours, with little to no recourse.

In April 2019, tech titan Google made an unprecedented decision that shows how seriously it takes this threat: going forward, it blocks sign-ins to its sites and services from embedded browser frameworks. Even though there is nothing malicious about these frameworks themselves, they look heuristically similar to man-in-the-middle attacks when Google has to determine whether a sign-in attempt is legitimate. This is a controversial decision, but it puts security first. Developers are now expected to transition to OAuth for federated login.

HTTP is not the only protocol vulnerable to man-in-the-middle attacks. In 2018, a vulnerability in Bluetooth pairing was disclosed (https://www.kb.cert.org/vuls/id/304725): some implementations did not sufficiently validate the elliptic-curve parameters exchanged during the Diffie-Hellman key exchange, allowing a nearby attacker to obtain the encryption key and intercept Bluetooth communications. Imagine an attacker intercepting communications as a user pairs their phone with their smart lock. Suddenly, it’s not hard to imagine insecure communication affecting not only one’s digital life, but one’s home as well.

To a business, MITM attacks represent risk: both reputation and profitability are at stake. According to the Ponemon Institute, the average cost of a data breach (of any kind) is $4.13 million, and that cost does not necessarily shrink in proportion to the size of the business. For many smaller businesses that do not clear this kind of revenue in a year, a single data breach can mean closing their doors.

Defending against the Man-in-the-Middle

So, how then can a savvy business protect itself from this risk? How can these attack vectors be effectively mitigated? There are both administrative and technical hurdles to overcome.

Deciding as a business on a uniform baseline policy for SSL/TLS is an effective way of making sure individual business units don’t make these decisions in a vacuum. There is always a trade-off between compatibility with older devices and security of data transfer.

A banking institution may well determine that allowing only TLS 1.2, with only the strongest ciphers and perfect forward secrecy across the board, is the way to go. It would also likely already be evaluating a move to TLS 1.3. In its business segment, losing compatibility with older clients is worth the assurance that even 100 years in the future (or at least until quantum computing becomes viable), this information will be kept secret.
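
A policy like the bank's above might be expressed, as a rough sketch, with Python's `ssl` module. The cipher string is an assumption chosen for illustration (ECDHE key exchange for forward secrecy, AEAD ciphers only), not a recommendation from any standard; the function name is hypothetical, and a real server would also load its certificate and private key.

```python
import ssl


def make_strict_server_context() -> ssl.SSLContext:
    """Sketch of a 'bank-style' server policy: TLS 1.2 or newer and
    forward-secret AEAD cipher suites only."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    # Refuse SSLv3, TLS 1.0 and TLS 1.1 outright.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # TLS 1.2: only ephemeral ECDH key exchange (forward secrecy) combined
    # with AES-GCM or ChaCha20-Poly1305, and never anonymous suites.
    # OpenSSL configures TLS 1.3 suites separately; set_ciphers() leaves them as-is.
    ctx.set_ciphers("ECDHE+AESGCM:ECDHE+CHACHA20:!aNULL")
    # A real server would also call ctx.load_cert_chain(certfile, keyfile).
    return ctx
```

Calling `get_ciphers()` on the resulting context is a quick way to review exactly which suites the policy leaves enabled. A deli favoring compatibility would loosen both the version floor and the cipher string, accepting the trade-off described above.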

A deli that accepts online orders, however, may decide that compatibility is more important, and that, all things considered, a data breach isn’t on the top ten list of things that could prove a death knell for the business. Regardless, whether you’re a bank or a purveyor of meats, user training is an equally important piece of the puzzle.

A recent academic study conducted over eight years by researchers from several prestigious US and UK universities found that employee training really does work. Over the course of multiple simulations across several years, employees showed decided improvement in identifying threats and escalating them properly.

Moving on: to make effective decisions, the decision-maker needs to either be knowledgeable or be able to delegate to someone who is. For bigger businesses, this might mean training users to look for the lock in their browser when making an online purchase. For the deli owner, the best decision is probably not to host the online services in their nephew’s basement, but rather to enlist the services of a professional.

TLS 1.3 vs the Man-in-the-Middle

For security professionals, staying informed about the threat landscape and up to date on the latest mitigations is paramount. In August 2018, TLS 1.3 was finalized in RFC 8446. With it, perfect forward secrecy is no longer a cipher-level decision but is mandated by the protocol specification: static RSA and static Diffie-Hellman key exchange were removed, so every full handshake uses ephemeral keys.
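
As a hedged illustration, a Python client can refuse anything older than TLS 1.3 by raising `minimum_version`. The sketch assumes Python 3.7+ linked against an OpenSSL build with TLS 1.3 support (OpenSSL 1.1.1 or later); the helper names are hypothetical. Note that TLS 1.3 suite names such as `TLS_AES_128_GCM_SHA256` no longer mention a key-exchange algorithm at all, because it is negotiated separately.

```python
import ssl
from typing import List


def make_tls13_only_client_context() -> ssl.SSLContext:
    """Client context that refuses to negotiate anything older than TLS 1.3."""
    if not ssl.HAS_TLSv1_3:
        raise RuntimeError("this Python/OpenSSL build does not support TLS 1.3")
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


def tls13_suites(ctx: ssl.SSLContext) -> List[str]:
    """Names of the TLS 1.3 cipher suites enabled on ctx.

    TLS 1.3 suite names specify only the AEAD cipher and hash; the key
    exchange is negotiated separately, and in a full handshake it is
    always ephemeral (EC)DHE."""
    return [c["name"] for c in ctx.get_ciphers() if c["protocol"] == "TLSv1.3"]
```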

The session resumption mechanisms of TLS 1.0 through 1.2 (session IDs and session tickets), which were prone to implementation weaknesses, were removed entirely in favor of resumption based on pre-shared keys (PSKs).

One controversial aspect of TLS 1.3 that will likely hinder its adoption is the new considerations it raises for forward proxies. Many organizations perform deep packet inspection in order to provide gateway antivirus protection and to protect against the theft of intellectual property. If the certificates your browser trusts on a company-owned device were issued not by the certificate authority of the site you’re visiting, but by your company’s firewall instead, the company is using this kind of technology.
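
One way to check this yourself is to look at who issued the certificate a connection actually receives. The sketch below is a minimal Python example using the standard `ssl` module; the function names are hypothetical. It assumes the inspecting firewall's root certificate is in the trust store Python uses (otherwise the handshake fails verification instead), and that you know which certificate authority the site normally uses.

```python
import socket
import ssl
from typing import Dict


def issuer_fields(peer_cert: dict) -> Dict[str, str]:
    """Flatten the 'issuer' entry of SSLSocket.getpeercert() into a dict."""
    # getpeercert() returns the issuer as a tuple of RDNs, each a tuple of
    # (attribute, value) pairs, e.g. ((('organizationName', 'Example CA'),), ...)
    fields: Dict[str, str] = {}
    for rdn in peer_cert.get("issuer", ()):
        for key, value in rdn:
            fields[key] = value
    return fields


def fetch_issuer(host: str, port: int = 443) -> Dict[str, str]:
    """Connect to host and return the issuer of the certificate it presents.

    On a network with TLS interception, this names the inspecting device's
    CA rather than the site's usual public CA."""
    ctx = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=10) as raw:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            return issuer_fields(tls.getpeercert())
```

For example, `fetch_issuer("example.com").get("organizationName")` can be compared against the CA you expect for that site.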

While a company can in fact intercept TLS 1.3 for this purpose (because it controls both the root certificate store on the endpoint and the route the traffic takes), many existing implementations relied on details of the TLS 1.0 through 1.2 handshake to perform deep packet inspection; for example, TLS 1.2 sends the server’s certificate unencrypted, whereas TLS 1.3 encrypts it. To support TLS 1.3, companies will at the very least have to upgrade the firmware on their devices, and in some cases even purchase new hardware that inspects traffic in a different way. This mostly affects companies using implicit (transparent) deep packet inspection. Explicit proxies instead rely on HTTP CONNECT requests, which work largely the same way with TLS 1.3.
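
To illustrate why explicit proxies are less affected, the sketch below builds the plain-text CONNECT request a client sends to an explicit proxy, and shows the standard-library `http.client` way of tunneling through one. The function names and proxy details are hypothetical. Everything after CONNECT is an ordinary TLS handshake: a non-inspecting proxy relays it blindly, while an inspecting explicit proxy terminates it itself with a certificate from its own CA, so neither depends on parsing TLS 1.2 handshake details.

```python
import http.client
import ssl


def build_connect_request(host: str, port: int = 443) -> bytes:
    """The request an explicit proxy receives before any TLS bytes flow.

    It names only the target host and port; the TLS handshake (of any
    version, including 1.3) then runs inside the resulting tunnel."""
    authority = f"{host}:{port}"
    return (
        f"CONNECT {authority} HTTP/1.1\r\n"
        f"Host: {authority}\r\n"
        "\r\n"
    ).encode("ascii")


def get_via_explicit_proxy(proxy_host: str, proxy_port: int, target_host: str) -> int:
    """Fetch https://target_host/ through an explicit proxy; return the status."""
    conn = http.client.HTTPSConnection(
        proxy_host, proxy_port, context=ssl.create_default_context(), timeout=10
    )
    # set_tunnel() makes http.client send CONNECT to the proxy first, then
    # run the TLS handshake (and hostname check) against target_host.
    conn.set_tunnel(target_host, 443)
    try:
        conn.request("GET", "/")
        return conn.getresponse().status
    finally:
        conn.close()
```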

This practice of intercepting HTTPS communication is controversial. It intentionally breaks the foundation of encrypted communications and introduces additional points in the chain where an attacker could insert themselves toward malicious ends. At the same time, the ability to inspect this data underpins many organizational policies that scan payloads for social security numbers, credit card numbers, or protected trade secrets being sent through unexpected channels. Much like Google’s decision to disallow authentication from well-intentioned frameworks for the betterment of overall security, it would be unsurprising if deep packet inspection itself faced a reckoning in the future.

Final Thoughts

Finally, with public key pinning losing traction, one of the best ways to protect your users is to employ HSTS (HTTP Strict Transport Security). A site opts in by sending a Strict-Transport-Security response header over HTTPS, and the browser caches that policy for the number of seconds given in the header’s max-age directive. While the policy is cached, the browser refuses insecure communication with that site: every http:// request is upgraded to HTTPS before it leaves the browser, crippling SSL stripping attacks. (This assumes, of course, that the user is not being attacked on their very first visit to that site from their computer.)
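
The mechanism can be modeled in a few lines. This is a toy Python sketch of a browser's HSTS store, not any browser's actual code; the class and function names are hypothetical, and it deliberately ignores `includeSubDomains` and port rewriting. It records the header's `max-age` and upgrades `http://` URLs for hosts with an unexpired entry.

```python
import time
from typing import Dict, Optional
from urllib.parse import urlsplit, urlunsplit


def parse_hsts(header_value: str) -> Dict[str, Optional[str]]:
    """Parse a Strict-Transport-Security value into {directive: value}."""
    directives: Dict[str, Optional[str]] = {}
    for part in header_value.split(";"):
        part = part.strip()
        if not part:
            continue
        name, sep, value = part.partition("=")
        directives[name.strip().lower()] = value.strip().strip('"') if sep else None
    return directives


class HstsCache:
    """Toy model of a browser's HSTS store (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._expiry: Dict[str, float] = {}  # host -> absolute expiry time

    def record(self, host: str, header_value: str, now: Optional[float] = None) -> None:
        # Call only for responses received over HTTPS; browsers ignore
        # the header when it arrives over plain HTTP.
        now = time.time() if now is None else now
        max_age = int(parse_hsts(header_value).get("max-age") or 0)
        if max_age > 0:
            self._expiry[host.lower()] = now + max_age
        else:
            # max-age=0 tells the browser to forget the host.
            self._expiry.pop(host.lower(), None)

    def upgrade(self, url: str, now: Optional[float] = None) -> str:
        """Rewrite http:// to https:// for hosts with an unexpired entry."""
        now = time.time() if now is None else now
        parts = urlsplit(url)
        host = (parts.hostname or "").lower()
        if parts.scheme == "http" and self._expiry.get(host, 0) > now:
            return urlunsplit(parts._replace(scheme="https"))
        return url
```

Because the upgrade happens before any request is sent, an attacker positioned to strip TLS never sees a plain-HTTP request to downgrade.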

You may also consider adding your website to the HSTS preload list, which is maintained by Google and used by all the major browsers. Even if a user has never visited a given site, as long as it’s on the list, their browser will know to force an HTTPS connection. This closes the narrow window an attacker has on a user’s first visit, before the browser has received the site’s HSTS header.
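
Before submitting a site, it may help to check the header itself. The Python sketch below tests a Strict-Transport-Security value against the header requirements published at hstspreload.org as of this writing (a max-age of at least 31536000 seconds, plus includeSubDomains and preload). Those rules can change, the function name is hypothetical, and the site must separately redirect HTTP to HTTPS and serve a valid certificate.

```python
from typing import Dict, List, Optional

# Header requirement for preload submission at the time of writing (an
# assumption that may change): max-age of at least one year, in seconds.
MIN_PRELOAD_MAX_AGE = 31536000


def preload_header_problems(header_value: str) -> List[str]:
    """Return reasons a Strict-Transport-Security value would fail the
    preload header requirements; an empty list means it looks eligible."""
    directives: Dict[str, Optional[str]] = {}
    for part in header_value.split(";"):
        name, sep, value = part.strip().partition("=")
        if name:
            directives[name.strip().lower()] = value.strip().strip('"') if sep else None

    problems: List[str] = []
    raw_age = directives.get("max-age")
    if raw_age is None or not raw_age.isdigit():
        problems.append("missing or invalid max-age")
    elif int(raw_age) < MIN_PRELOAD_MAX_AGE:
        problems.append(f"max-age must be at least {MIN_PRELOAD_MAX_AGE}")
    if "includesubdomains" not in directives:
        problems.append("missing includeSubDomains")
    if "preload" not in directives:
        problems.append("missing preload")
    return problems
```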

One word of caution, though. If you use HSTS (and especially if your site is on the preload list) and your SSL certificate expires or gets revoked, your site is effectively broken until you replace the certificate: HSTS prevents users from clicking through the browser’s certificate warning, so they will be unable to connect. This happened to the US government during its shutdown, when over 80 websites broke due to certificate expiry.
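
A simple safeguard is to monitor certificate lifetimes before they lapse. The following minimal Python sketch reads the notAfter field returned by `SSLSocket.getpeercert()` and converts it with `ssl.cert_time_to_seconds()`; the function names and the 30-day threshold are assumptions for illustration. A certificate that has already expired fails verification, so `check_site()` raises `ssl.SSLCertVerificationError` in that case rather than returning.

```python
import socket
import ssl
import time
from typing import Optional


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Days remaining before a certificate's notAfter timestamp.

    not_after uses the format returned by SSLSocket.getpeercert(),
    e.g. 'Jun  1 12:00:00 2030 GMT'. Negative means already expired."""
    now = time.time() if now is None else now
    return (ssl.cert_time_to_seconds(not_after) - now) / 86400


def check_site(host: str, warn_days: int = 30, port: int = 443) -> bool:
    """Return False if host's certificate expires within warn_days."""
    ctx = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=10) as raw:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            remaining = days_until_expiry(tls.getpeercert()["notAfter"])
    return remaining > warn_days
```

Run on a schedule (for example, daily), a check like this gives advance warning before an HSTS-protected site becomes unreachable.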

Defending against the man-in-the-middle isn’t as simple as just installing an SSL certificate; there are other implementation considerations as well. Remember: keep your implementations up to date with the latest protocols and the most secure cipher suites, err on the side of security rather than interoperability, and use HSTS.

