6 Dangers of Generative AI and What’s Being Done to Address Them

The results of generative AI can be seen in the media you consume online and the information you rely on to do your job. But generative artificial intelligence doesn’t come without risks and challenges…

Artificial intelligence (AI) is one of mankind’s greatest achievements; it can be used for everything from solving niche and global issues to streamlining business operations through automation. There’s a category of AI that creates all kinds of incredibly realistic media based on patterns in existing content — everything from technical documentation and translations to hyper-realistic images, video, and audio content. This is called generative AI because it uses existing content datasets to identify patterns and use them to generate new content. (We’ll speak more about the difference between AI and generative AI later.)

Examples of some popular generative AI tools include:

- ChatGPT — a chatbot that uses a wealth of datasets to generate human-like responses and provide information.

- Google Pixel 8’s Magic Editor — an image editing tool that enables users to edit images using generative AI.

- Dall-E 2 — an image and art generator tool that enables users to remove and manipulate elements.

- DeepBrain — a video production and avatar generator tool.

But with all of the “light” and benefits of generative AI, there’s also a dark side that must be discussed. While it’s true that generative AI can be used to create incredible things, it can also be used to cause harm — both intentionally and unintentionally.

What are the generative AI risks and challenges we face as a society, both as individuals and as working professionals? And what are the public and private sectors doing to address these concerns?

Let’s hash it out.

What we’re hashing out…

- 6 Generative AI Risks and Challenges

- 1. Using Fake Media to Create False Narratives, Deceive Consumers, and Spread Disinformation

- 2. Generating Inaccurate or Made-Up Information

- 3. Violating Personal Privacy Rights and Expectations

- 4. Using Deepfakes to Shame and Attack Individuals

- 5. Developing New Cyber Attack Channels and Methods

- 6. Generative AI Creates New Intellectual Property and Copyright Infringement Concerns

- An Overview of the Differences Between Generative AI and Traditional AI

- What’s Being Done to Address Generative AI-Based Risks and Concerns

- Final Thoughts on Generative AI Risks and Dangers

6 Generative AI Risks and Challenges

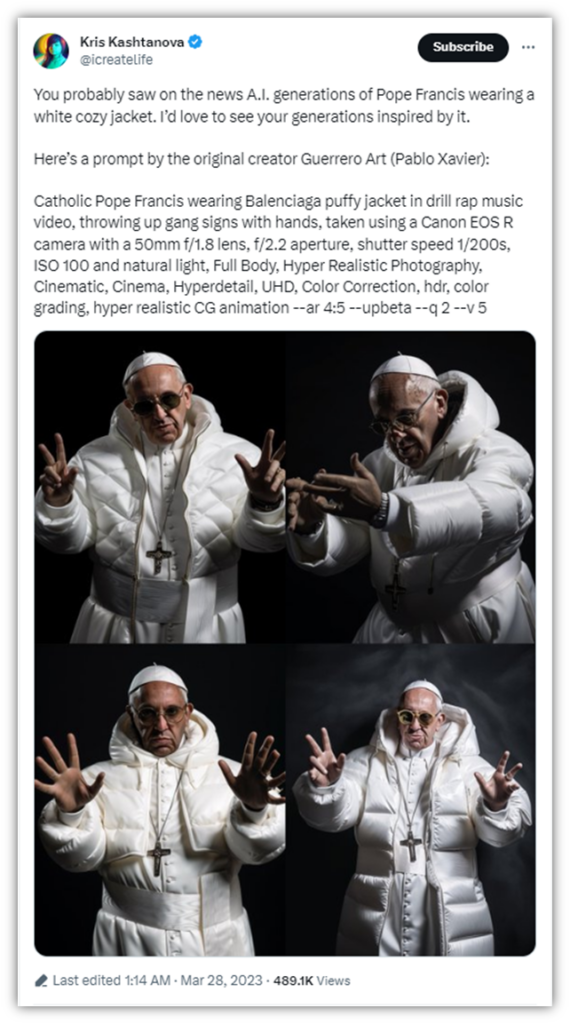

If you’ve seen pictures of Pope Francis wearing a puffy white Balenciaga jacket or the video of Mark Zuckerberg talking about how “whoever controls the ‘dada’ [data] controls the future,” then you’ve seen deepfake content. Deepfake images and videos are just a couple of examples of generative AI content.

Deepfake technology can be used to create fun, entertaining content; however, it can also be used to create content that aims to trick, deceive, and manipulate others. It can be used to get employees to make fraudulent bank transfers or to control public opinion.

Knowing this, it’s time to explore some of the biggest generative AI risks and concerns we’re facing with the ongoing improvements to modern technologies.

1. Using Fake Media to Create False Narratives, Deceive Consumers, and Spread Disinformation

What we see with our eyes and hear with our ears are powerful testaments to authenticity. But what if we can’t believe what we’re seeing or hearing? Imagine that someone creates a fake video of a tragic event that never happened, such as a terrorist attack, to spur some action. Or imagine that someone spreads false information about a political candidate doing something illegal, or something perceived to be morally or ethically reprehensible, to elicit a reaction.

Someone could use phony audio recordings to manipulate opinions about politicians or other public individuals. They could post fake photos of a political candidate in a compromising position or scenario to harm their reputation. Even if it comes out later that the video, image, or audio was fake, it’s too late; the damage is done.

Even advertisements that use generative AI to mislead consumers are a no-no. (One might argue all ads are annoying and misleading, but that’s a debate for another day…) It’s for reasons like these that bipartisan U.S. lawmakers have proposed two pieces of legislation that aim to ban the undisclosed use of AI in election-related media and ads:

- Protect Elections from Deceptive AI Act (S.2770). We’ll speak more about this one later in the article, but the “spoiler alert” is that this legislation aims to prohibit the distribution of deceptive AI-generated media relating to federal political candidates.

- Real Political Advertisements Act (H.R. 3044). This bill aims to create greater transparency and accountability for the responsible use of generative AI content in ads.

2. Generating Inaccurate or Made-Up Information

As with all new technologies, there will be hiccups and bumps along the road. One of the issues facing generative AI technologies is that they’re limited in terms of accuracy. If they’re basing their analyses and creations on inaccurate or fraudulent information, their outputs also stand to be inaccurate.

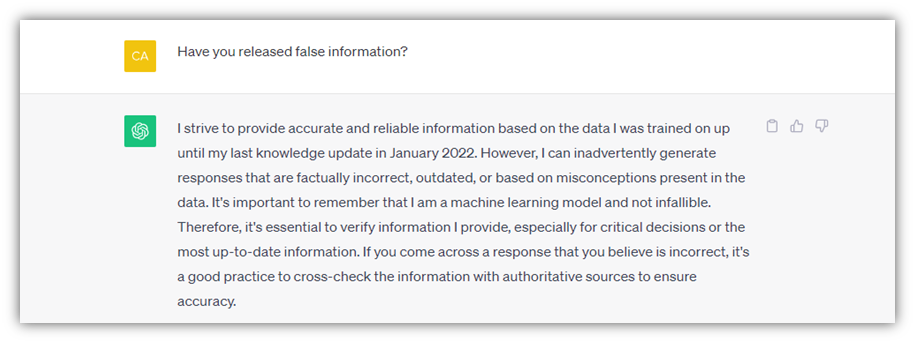

ChatGPT, a popular generative AI tool, historically hasn’t been connected to the internet and has “limited knowledge of world and events after 2021.” However, this changed in part when OpenAI released plugins earlier this year, giving the AI-powered chatbot access to third-party applications for certain use cases. ChatGPT’s Terms of Use even warn that the information provided may not be accurate and that organizations and people need to do their due diligence:

“Artificial intelligence and machine learning are rapidly evolving fields of study. We are constantly working to improve our Services to make them more accurate, reliable, safe and beneficial. Given the probabilistic nature of machine learning, use of our Services may in some situations result in incorrect Output that does not accurately reflect real people, places, or facts. You should evaluate the accuracy of any Output as appropriate for your use case, including by using human review of the Output.”

Some examples that OpenAI talks about in its FAQs include making up facts, quotes, and citations (what the company refers to as “hallucinating”) and misrepresenting information.

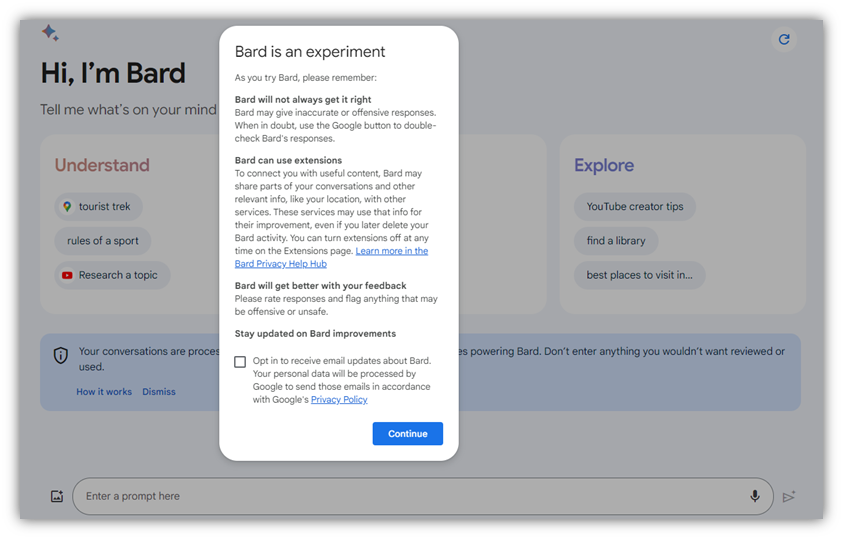

Google Bard is another example of an AI that admits its shortcomings:

Researchers at Stanford University and UC Berkeley point to clear differences between how two models of the same generative AI service can vary. In this case, they looked at GPT-3.5 and GPT-4 and identified significant fluctuation (i.e., drift) in the models’ abilities to perform diverse tasks across seven categories.

According to the study:

“We find that the performance and behavior of both GPT-3.5 and GPT-4 can vary greatly over time. For example, GPT-4 (March 2023) was reasonable at identifying prime vs. composite numbers (84% accuracy) but GPT-4 (June 2023) was poor on these same questions (51% accuracy). This is partly explained by a drop in GPT-4’s amenity to follow chain-of-thought prompting. Interestingly, GPT-3.5 was much better in June than in March in this task. GPT-4 became less willing to answer sensitive questions and opinion survey questions in June than in March. GPT-4 performed better at multi-hop questions in June than in March, while GPT-3.5’s performance dropped on this task. Both GPT-4 and GPT-3.5 had more formatting mistakes in code generation in June than in March. We provide evidence that GPT-4’s ability to follow user instructions has decreased over time, which is one common factor behind the many behavior drifts. Overall, our findings show that the behavior of the “same” LLM service can change substantially in a relatively short amount of time, highlighting the need for continuous monitoring of LLMs.”
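The “continuous monitoring” the researchers call for can be pictured as re-running the same benchmark against each dated version of a model and flagging large swings. Here’s a minimal sketch of that idea. The `Snapshot` structure, the benchmark size of 100, and the 10-point review threshold are all assumptions made for illustration; only the 84% and 51% accuracy figures come from the study quoted above.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """Benchmark result for one dated version of a model (hypothetical structure)."""
    label: str
    correct: int
    total: int

    @property
    def accuracy(self) -> float:
        return self.correct / self.total


def drift(before: Snapshot, after: Snapshot) -> float:
    """Change in accuracy, in percentage points, between two snapshots."""
    return (after.accuracy - before.accuracy) * 100


def needs_review(before: Snapshot, after: Snapshot, threshold_points: float = 10.0) -> bool:
    """Flag the pair when accuracy moved by more than the (assumed) threshold."""
    return abs(drift(before, after)) > threshold_points


# Counts chosen to match the accuracies quoted above (84% and 51%);
# the benchmark size of 100 questions is an assumption.
march = Snapshot("GPT-4 (March 2023)", correct=84, total=100)
june = Snapshot("GPT-4 (June 2023)", correct=51, total=100)

print(f"{march.label}: {march.accuracy:.0%}")
print(f"{june.label}: {june.accuracy:.0%}")
print(f"Drift: {drift(march, june):+.1f} points, review needed: {needs_review(march, june)}")
```

A real monitoring setup would store the model’s answers to each question rather than hard-coded counts, but the comparison step would look much the same.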

3. Violating Personal Privacy Rights and Expectations

It’s no secret that people want to do things the easy way. This means that they may do stuff they shouldn’t to make their jobs easier and to save time. Unfortunately, this can result in sensitive information being shared outside a company’s internal systems that (ideally) are secure.

Protected Information May Be Shared Inadvertently

The University of Southern California’s Sol Price School of Public Policy talked about this generative AI risk to protected health information. There have been cases in which healthcare providers have entered patients’ HIPAA-protected information into chatbots to help generate reports and streamline processes. When they do this, they’re entering protected data into third-party systems that may not be secure and don’t have the patients’ consent to process it:

“The protected health information is no longer internal to the health system. Once you enter something into ChatGPT, it is on OpenAI servers and they are not HIPAA compliant. That’s the real issue, and that is, technically, a data breach.”

Furthermore, it’s not just the AI that may have access to what you share. Google Bard, Google’s answer to ChatGPT, warns users up front that conversations with the tool will be seen by human reviewers:

Although I’m sure Google has very lovely people working for the company, they’re not authorized to view patients’ protected health information (PHI).

Generative AI Tools Can Lead to Inadvertent Personal Data Disclosures

One of the significant concerns regarding the widespread adoption of generative AI and other AI technologies is the risk they pose to privacy. Because generative AI draws on vast quantities of data from many sources, some of that data will likely expose personal details, biometrics, or even location-related data about you.

In an article for the International Association of Privacy Professionals (IAPP), Zoe Argento, co-chair of the Privacy and Data Security Practice Group at Littler Mendelson, P.C., called out some of the generative AI risks and concerns regarding data disclosures:

“The generative AI service may divulge a user’s personal data both inadvertently and by design. As a standard operating procedure, for example, the service may use all information from users to fine-tune how the base model analyzes data and generates responses. The personal data might, as a result, be incorporated into the generative AI tool. The service might even disclose queries to other users so they can see examples of questions submitted to the service.”

Any time you click “accept” on one of those mile-long user agreements that companies put in front of you, you’re clicking away your privacy rights without even realizing what you’re giving away. (Granted, even if you read it, unless you’re a lawyer, it doesn’t mean you’ll fully understand what’s being asked of you due to all of the “legalese” being thrown at you.) When companies say “affiliates” and “partners” may utilize your personal data and any other provided information, you have no clue what that means in terms of where your data is going or how it’s going to be used.

What are examples of privacy-related generative AI risks and concerns businesses should be aware of when working with these types of service providers? Argento identified three primary issues:

“The first concerns disclosure of personal data to AI tools. These disclosures may cause employers to lose control of the data and even result in data breaches. Second, data provided by generative AI services may be based on processing and collecting personal data in violation of data protection requirements, such as notice and the appropriate legal basis. Employers could potentially bear some liability for these violations. Third, when using generative AI services, employers must determine how to comply with requests to exercise data rights in accordance with applicable law.”

But how can you increase the privacy and security of data when using generative AI services?

- Evaluate every generative AI service provider to determine their potential risk levels based on their policies, practices, and other factors.

- Specify how your data is to be processed, stored, and destroyed.

- Ensure that the service provider’s processes meet regulatory data security and privacy requirements.

- Negotiate with the provider to get them to contractually agree to robust security and data privacy processes (and be sure to get it in writing).

4. Using Deepfakes to Shame and Attack Individuals

Generative AI tools have even been used to create deepfake pornographic materials (videos, images, etc.) that cause mental, emotional, reputational, and financial harm to victims. The personal likenesses of many celebrities, often women, are used to create fraudulent images and videos of them engaging in sexual acts. And as if that isn’t disturbing enough, this technology has also been used to create child pornography.

Of course, this technology isn’t only used to make false content of celebrities. It also opens the doors to everyday people being victimized by deepfake media.

Imagine that a woman breaks up with her fiancé. Her now-ex fiancé is angry about the situation and decides to take it out on her because he wants to cause her pain. He uses generative AI to create “revenge porn” (i.e., pornography of the other party that the victim doesn’t know about or consent to) and, unbeknownst to her, sends the video to her boss, friends, and family. Imagine the issues and humiliation she’ll face as a result of this targeted attack.

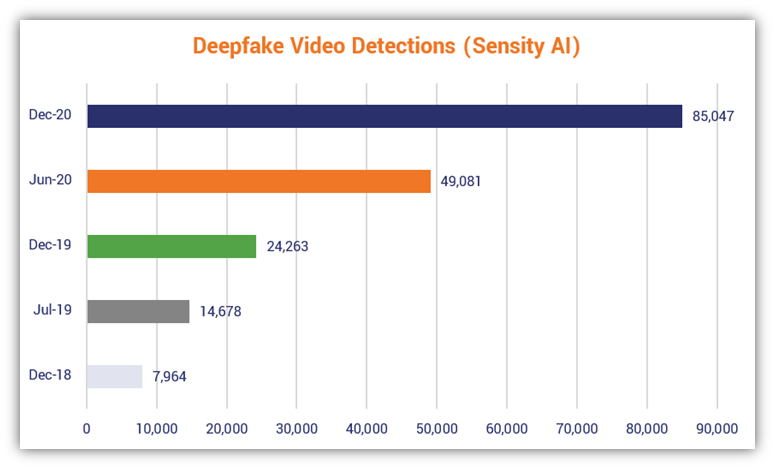

But just how big of an issue is it? A 2019 study by Deeptrace AI (now Sensity AI) found that of the 14,678 deepfake videos it identified online, a whopping 96% were categorized as non-consensual pornography. The number of deepfakes Sensity AI found as of December 2020 jumped to more than 85,000. As you can see in the accompanying chart, the number of deepfake videos online has essentially doubled every six months.
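The doubling claim can be sanity-checked with a little arithmetic using the two counts above. This is a rough sketch that assumes steady exponential growth. The elapsed time is an assumption: the text only says “2019” and “December 2020,” so 18 months is used as a plausible gap, and the function name is made up for this example.

```python
import math


def doubling_time_months(start_count: int, end_count: int, months_elapsed: float) -> float:
    """Months needed to double, assuming steady exponential growth."""
    growth_factor = end_count / start_count
    return months_elapsed * math.log(2) / math.log(growth_factor)


# Counts quoted above. The 18-month gap between the two measurements is an
# assumption, because the text does not give the exact month of the 2019 count.
ASSUMED_MONTHS = 18
estimate = doubling_time_months(14_678, 85_000, ASSUMED_MONTHS)
print(f"Implied doubling time: about {estimate:.1f} months")
```

With these inputs, the estimate lands at roughly seven months, which is in the same ballpark as the six-month figure. A shorter assumed gap, or a final count above 85,000, would bring it lower.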

5. Developing New Cyber Attack Channels and Methods

Bad guys love generative AI tools because they can be used to carry out new attacks and make their jobs easier. Here are a few quick examples of some of the ways that cybercriminals can use these technologies to do bad things:

Cybercriminals Can Craft More Believable Phishing Messages

Everyone knows the telltale signs of a common phishing email: unusual grammar, poor spelling, bad punctuation, etc. These messages target the low-hanging fruit (i.e., unsuspecting users who are ignorant of cyber awareness practices).

By eliminating the poor grammar and fixing the typos and punctuation you’re accustomed to seeing in most phishing emails, bad guys can make their messages more realistic. They can use these fraudulent messages to trick more victims into giving up sensitive data or doing something they’ll later regret. For example, they can create authentic-looking emails from your bank, a service provider, the IRS, or even your employer.

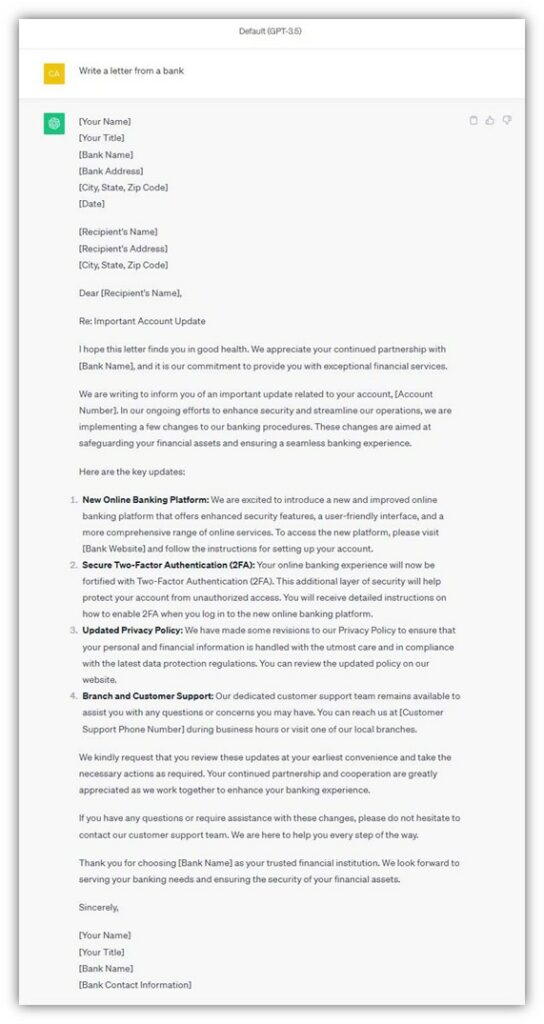

Here’s a quick example of a letter that ChatGPT threw together in less than 3 seconds:

Much of the information an attacker would fill out to make a phishing email more believable can be found on Google (company’s address and information, target’s name and address, bank representative’s name and title, etc.). They could then embed phishing links into the content to trick users into entering their credentials on a fraudulent login page.

Generative AI content can also help cybercriminals bypass traditional detection tools by using unique verbiage in lieu of the same repeated messages that email spam filters are programmed to search for.
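To see why unique wording is a problem for filters that look for repeated messages, consider this deliberately simplified sketch. It’s a toy signature-based filter, not how any particular email product works; real filters combine many more signals. The `SignatureFilter` class, the `fingerprint` helper, and the sample messages are all hypothetical.

```python
import hashlib
import re


def fingerprint(message: str) -> str:
    """Hash a message after lowercasing and collapsing punctuation/whitespace."""
    normalized = re.sub(r"[^a-z0-9]+", " ", message.lower()).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class SignatureFilter:
    """Toy filter that blocks only messages whose fingerprint was seen before."""

    def __init__(self) -> None:
        self.known_bad: set[str] = set()

    def report(self, message: str) -> None:
        """Record a message that a user or analyst has flagged as phishing."""
        self.known_bad.add(fingerprint(message))

    def is_blocked(self, message: str) -> bool:
        return fingerprint(message) in self.known_bad


spam_filter = SignatureFilter()
spam_filter.report("URGENT: Your account is locked. Click here to verify!")

# Same template with cosmetic changes: still caught after normalization.
resent = "urgent - your account is LOCKED... click here to verify"
# Reworded message with the same intent: the fingerprint differs, so it slips through.
reworded = "We noticed unusual activity and paused your account. Please confirm your details."

print(spam_filter.is_blocked(resent))    # True
print(spam_filter.is_blocked(reworded))  # False
```

If every phishing email is freshly written by a generative AI tool, there’s no repeated message for a filter like this to match.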

Social Engineers and Other Bad Guys Can More Effectively Do Their “Dirty Deeds”

You know those annoying spam calls you receive with the auto recordings? Bad guys can use those calls as opportunities to record samples of your voice. These samples can then be used to generate fake dialogue — for example, they could use your voice to say a specific phrase required by a bank or financial institution or to make a short phone call to a subordinate.

According to research from Pindrop:

“[…] once an account is targeted by fraudsters in a phishing or account takeover attack, the likelihood of a secondary fraud call targeting that account within 60 days is between 33% and 50%. Even after 60 days have passed from the initial attack, the risk of fraud for a previously targeted account remains extremely elevated between 6% and 9%.

We also find that in most instances fraudsters are quite successful in passing the verification process when speaking with an agent. In analyzing our client data, we have found that fraudsters are able to pass agent verification 40% to 60% of the time.”

We’ve already seen examples of these types of scams happening over the past several years. In 2019, news organizations globally reported that a fraudster swindled €220,000 ($243,000) out of a company’s CEO in this way. They pretended to be the CEO’s superior from the firm’s parent company, using a deepfake recording of the boss’s voice.

Now, for some additional bad news: research from a study of 529 individuals published on PLOS One shows that one in four survey respondents couldn’t identify deepfake audio. Even when they were presented with examples of speech deepfakes, the results improved only marginally. If this survey group accurately represents, say, the population of the U.S., that means that 25% is susceptible to falling for deepfakes. That’s approximately 83,916,251 people!
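For anyone who wants to check the math, here’s the calculation behind that number. The population figure is an assumption, back-calculated from the total above (83,916,251 × 4), since the source of the population estimate isn’t stated.

```python
# The 25% share comes from the survey cited above. The population figure is an
# assumption, back-calculated from the article's own total (83,916,251 * 4).
ASSUMED_US_POPULATION = 335_665_004
SHARE_UNABLE_TO_SPOT_DEEPFAKES = 0.25

susceptible = round(ASSUMED_US_POPULATION * SHARE_UNABLE_TO_SPOT_DEEPFAKES)
print(f"{susceptible:,} people")  # 83,916,251 people
```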

Bad Guys Can Use Generative AI to Try to Identify Workarounds and Get Access to Secrets

Take a look at the examples shared by Mashable, which show that a cyber security researcher was able to get ChatGPT and Google Bard to cough up Windows 10 Pro and Windows 11 Pro keys when prompted. The researcher tricked the chatbots by incorporating the request into a larger story.

Thankfully, in this case, Digital Trends reports that the keys he managed to get were generic license keys, meaning that they only provide restricted functionalities and certain features are limited.

Of course, license keys aren’t as big of a concern as other types of keys — say, cryptographic keys. But imagine if one of these generative AI tools managed to collect your cryptographic keys that were accidentally leaked in a GitHub post or stolen in a ransomware attack.

6. Generative AI Creates New Intellectual Property and Copyright Infringement Concerns

As we touched on earlier, generative AI technologies differ from traditional AI in that they rely on enormous datasets of existing materials to draw “inspiration” from based on patterns. This is why AI-generated poems can sound eerily human and, in some cases, even beautiful (although that’s not always the case).

But who owns AI-generated works? If a generative AI inputs different artists’ works as inspiration (i.e., to find patterns it can use with its algorithm), who owns or has rights to the resulting creative content?

This is something that’s still being “hashed out” within the industry, and even the U.S. Copyright Office is working to find a clear-cut answer. The Office extended its request for public comments on its Artificial Intelligence Notice of Inquiry as part of its Artificial Intelligence initiative that started earlier this year. According to the Notice of Inquiry, which was published in the Federal Register:

“To inform the Office’s study and help assess whether legislative or regulatory steps in this area are warranted, the Office seeks comment on these issues, including those involved in the use of copyrighted works to train AI models, the appropriate levels of transparency and disclosure with respect to the use of copyrighted works, and the legal status of AI-generated outputs.”

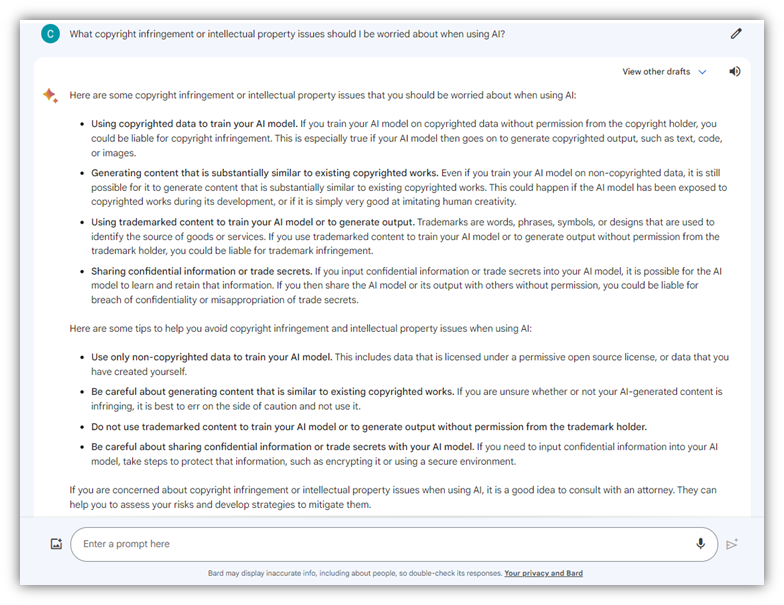

For fun, I asked Google Bard for its take on generative AI risks and concerns relating to IP and copyright issues:

An Overview of the Differences Between Generative AI and Traditional AI

Generative AI is a subcategory of AI that is built to create (generate) new content — images and illustrations, code, videos, etc. It relies on machine learning (ML), which is a type of artificial intelligence, to analyze and detect patterns in provided (existing) data, then create new content that follows similar patterns.

In a sense, generative AI reminds me of the Borg — a partially synthetic species of beings in the Star Trek universe that share one collective consciousness, one “hive mind.” The Borg Collective represents a formidable foe for anyone who comes across their path. Their technical advancements are due largely to the vast collective knowledge of thoughts, experiences, skills, technologies, and individual creativity that the assimilated individuals gained throughout their lives. Once assimilated, all of their knowledge, and even their individuality, is surrendered to the Collective. (“Resistance is futile.”)

Likewise, generative AI tools can be incredible resources for businesses, consumers, and content creators because they create new and interesting things by borrowing from the underlying structures and concepts created by others. As a result, generative AI tools help organizations save time, money, and resources.

For a neat example of how a company used generative AI in its marketing, check out how Cadbury created a plethora of local, personalized ads for consumers without having to reshoot hundreds or thousands of individual videos with a well-known Indian actor:

But as we’ve learned, there are many generative AI risks and issues that also must be considered. And now that we have an idea of what some of these risks are, let’s talk about what can be done to fix them.

What’s Being Done to Address Generative AI-Based Risks and Concerns

It’s virtually impossible to make an informed decision if you don’t know what’s real and what’s not. Businesses and governments alike are taking steps to combat malicious generative AI attacks and put the power of discernment back into the hands of consumers.

But these efforts are in their infancy; we’re just starting to learn what steps are needed, what steps will be helpful, and what seemingly good steps may backfire. It’s going to take a while to figure out what we need to do to keep generative AI safe. Here are a few of the efforts underway…

EU Member Nations Are in Discussions About the AI Act

In 2021, the European Commission proposed the AI Act. The version backed by the European Parliament would require disclosure when generative AI is being used. It would also ban the use of AI for multiple applications, including:

- “Real-time” and “post” biometric identification and categorization systems (with exceptions for law enforcement requiring judicial authorization);

- Social scoring (i.e., classifying people based on personal characteristics or social behaviors);

- Facial recognition systems that are created by scraping CCTV footage and internet sources that violate a person’s right to privacy and other human rights;

- Emotion recognition systems that can lead to incorrect conclusions or decisions about a person; and

- Predictive policing systems that are based on certain types of data.

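As of June 2023, the European Parliament had adopted its negotiating position on the Act, and Members of the European Parliament (MEPs) were beginning negotiations with EU member states on its final form.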

U.S. Leaders Call for Standards and Guidance Regarding the Secure and Safe Use of AI

On Oct. 30, 2023, the White House released President Biden’s Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, which aims to create standards, processes, and security mechanisms for the safe and secure use of AI technologies (including generative AI systems).

The executive order outlines several key priorities and guiding principles. Here’s a quick overview of each category; just keep in mind that there’s far more involved in each than what I can cover in a quick overview:

- Ensuring Safety, Security, and Trust Through Standards and Best Practices. The goal is to “Establish guidelines and best practices, with the aim of promoting consensus industry standards, for developing and deploying safe, secure, and trustworthy AI systems.” This involves establishing standards, developing a “companion resource” to the NIST Artificial Intelligence Risk Management Framework (AI RMF 1.0, also known as NIST AI 100-1) for generative AI (since the existing framework can’t address those risks), and setting benchmarks.

- Promoting Innovation, Competition, and Collaboration. This aims to make it easier to attract and retain more AI talent by streamlining immigration processes, timelines, and criteria, establishing new AI research institutes, and creating guidance regarding patents of inventions involving AI and generative AI content.

- Advancing Equity and Civil Rights. The concern regarding some AI technologies is that they can lead to “unlawful discrimination and other harms[.]” This EO aims to recommend best practices and safeguards for AI uses by law enforcement, government benefits agencies and programs, and systems relating to housing and tenancy.

- Protecting American Consumers, Students, Patients, and Workers. This includes the incorporation of AI-related security, privacy, and safety standards and practices across various sectors, including “healthcare, public health, and human services,” education, telecommunication, and transportation.

- Protecting the Privacy of Americans. This is all about mitigating AI-based privacy risks relating to the collection and use of individuals’ data. This entails establishing new and updated guidelines, standards, policies, and procedures, and incorporating privacy-enhancing technologies (PETs) “where feasible and appropriate.”

- Advancing U.S. Federal Government’s Use of AI. The goal here is to establish guidance and standards for federal agencies to safely and securely manage and use AI. This includes expanding training, recommending guidelines, safeguards and best practices, and eliminating barriers to responsible AI usage.

- Strengthening America’s Global Leadership Efforts. The idea is to be at the forefront of AI-related initiatives by leading collaborative efforts in the creation of AI-related frameworks, standards, practices, and policies.

It’s worth noting that one of the things pointed out in the executive order is the development of security mechanisms that help consumers and users differentiate real from “synthetic” content and media. According to the EO: “[…] my Administration will help develop effective labeling and content provenance mechanisms, so that Americans are able to determine when content is generated using AI and when it is not.”

This brings us to our next point…

Organizations Globally Are Working Together to Create Standards for AI Media

In August, we introduced you to C2PA — what’s otherwise known as the Coalition for Content Provenance and Authenticity. This open technical standard aims to help everyday people identify and distinguish genuine images, audio, and video content from fake media using public key cryptography and public key infrastructure (PKI).

It’s a coalition that consists of dozens of industry leaders who, together, are working to create universal tools that can be used across a multitude of platforms to prove data provenance (i.e., historical data that proves the authenticity and veracity of media files and content).

The camera manufacturer Leica recently released its new M11-P camera, which has content credentials built into it to enable content authentication from the moment a photograph is taken.
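To make the provenance idea more concrete, here’s a heavily simplified sketch of what “binding” a claim to a piece of media looks like. This is not the C2PA format or any C2PA API. It also swaps in an HMAC (a shared-secret check from the Python standard library) where real content credentials use public key signatures and PKI certificates, so treat the key, the function names, and the manifest layout as hypothetical stand-ins.

```python
import hashlib
import hmac
import json

# Stand-in for a device's private signing key. Real content credentials use
# public key signatures and certificates; HMAC is used here only because it
# ships with the Python standard library.
DEMO_KEY = b"hypothetical-camera-key"


def make_manifest(content: bytes, creator: str) -> dict:
    """Bind a content hash and a creator claim together under one signature."""
    claim = {"creator": creator, "sha256": hashlib.sha256(content).hexdigest()}
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(content: bytes, manifest: dict) -> bool:
    """Check that the manifest is untampered and still matches the content."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    content_ok = hashlib.sha256(content).hexdigest() == manifest["claim"]["sha256"]
    return signature_ok and content_ok


photo = b"raw image bytes straight from the sensor"
manifest = make_manifest(photo, creator="Example Camera")

print(verify(photo, manifest))               # True: original file
print(verify(photo + b" edited", manifest))  # False: altered file
```

The key difference in a public key scheme is that anyone can verify the signature with the signer’s public key, while only the holder of the private key can create it. With the shared secret used in this sketch, whoever can verify can also forge, which is why it’s only a stand-in.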

U.S. Lawmakers Aim to Pave the Road for Future Legal Actions Against Offenders

While it’s true that PornHub, Twitter, and other platforms banned AI-generated pornographic content, it isn’t enough to stem the flow of new (and harmful) content being created and distributed. Now, state and federal lawmakers are trying their hands at it.

H.R. 3106 (otherwise known as the “Preventing Deepfakes of Intimate Images Act”), a federal bill that was introduced to the U.S. House of Representatives’ Committee on the Judiciary, aims to prohibit the disclosure of nonconsensual intimate digital depictions (i.e., images or videos of a person that are created or altered using digital manipulation and that feature sexually explicit or otherwise intimate content).

Those who commit crimes that fall under this bill could face civil actions that lead to payment to the victim for damages caused. They also may face imprisonment, fines, and other penalties, depending on the severity of their offenses.

The state of New York recently passed similar legislation. NY Governor Hochul signed into law Senate Bill S1042A, which prohibits the “unlawful dissemination or publication of intimate images created by digitization and of sexually explicit depictions of an individual.” The law amended a few subdivisions of the state’s penal law and repealed another.

Final Thoughts on Generative AI Risks and Dangers

Generative AI is quickly evolving and holds a lot of promise in terms of its capabilities. In addition to reducing costs and speeding up some creative processes, these tools offer unique takes on content creation because they’re building on patterns identified within existing media and content.

Do generative AI risks outweigh the benefits? That’s still up for debate. But what isn’t up for debate is that generative AI is here and is part of our world now. As more businesses and users adopt these technologies, they have an ever-increasing responsibility to ensure that they’re using these generative tools responsibly. They must use them in a way that promotes safety, security, and trust and doesn’t cause harm (intentionally or otherwise).
