Quantum Computing Just Took a Big Step Forward

Error correction is an issue that’s been plaguing quantum computing research for decades. Now, researchers from Harvard, MIT, and other leading organizations say they’ve found a way to improve error correction that may advance the clock on how soon we’ll need quantum-resistant cryptography.

For years, we’ve heard the oft-repeated phrase “quantum is coming.” It’s reminiscent of “Winter is Coming” from the Game of Thrones HBO series in that, much like the Night King and White Walkers, it often conjures a sense of dread in cybersecurity professionals.

Sure, quantum computing would mark one of the most significant technological advancements of our lifetime — it’s poised to solve global problems. It could be capable of near-instantaneous diagnoses or, perhaps, cures for (currently) incurable diseases. But in addition to immense opportunities, quantum computing also poses significant threats in the form of its immense processing power and ability to break public key cryptography.

Simply put, having broken public key algorithms means that potentially decades’ worth of personal and otherwise sensitive data could be exposed and exploited via harvest now, decrypt later (HNDL) attacks. Research from Deloitte shows that more than half (50.2%) of cybersecurity professionals are concerned about these attacks, which could lay bare everything from social security numbers and personal health records to trade secrets and intellectual property.

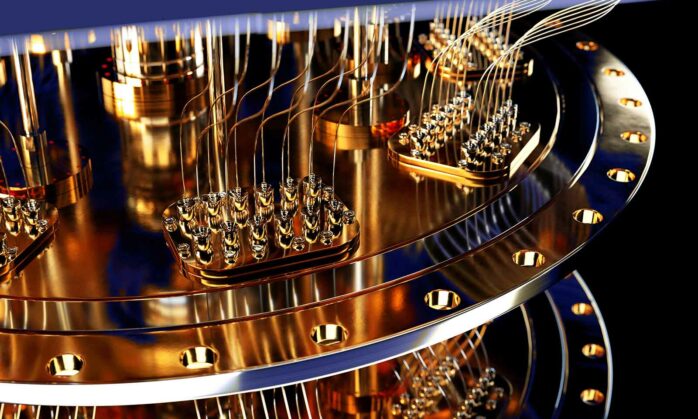

Although quantum computers have been on the horizon for decades, they’ve yet to truly arrive. However, according to a recent paper published in Nature, that future may arrive sooner than expected. Researchers working on the Defense Advanced Research Projects Agency’s (DARPA) Optimization with Noisy Intermediate-Scale Quantum devices (ONISQ) program were able to develop a first-ever quantum circuit that operates using logical quantum bits (i.e., qubits, pronounced “que bits”).

Not sure what that means? That’s what we’re here for! We’ll look under the hood to better understand what this breakthrough entails, how it’s poised to impact your business (down the road), and what you can do to start preparing for quantum computers now.

Let’s hash it out.

TL;DR: A 60-Second Overview of What’s Occurred (And Why You Should Care)

In a nutshell, a crack team of researchers from Harvard, Massachusetts Institute of Technology (MIT), California Institute of Technology (Caltech), National Institute of Standards and Technology (NIST), QuEra Computing, and Princeton has found a way to create a quantum processor with the highest known number of logical qubits. (Logical qubits are more controllable than physical qubits and can better correct errors in quantum computations.)

So, how’d they do it? In part, by using atomic particles and lasers. While it may not be as cool as “sharks with freakin’ laser beams attached to their heads,” the thought here is that their research could open the door to more research that will enable the scaling and capitalization of logical qubit devices.

If other researchers can replicate and build upon their research in the future, it means that quantum computers could be on our doorsteps sooner — no telling how much earlier — than expected. And with that incredible advancement comes the threats that quantum computers pose to data that’s been encrypted using classical public key encryption algorithms (e.g., RSA).

Of course, taking any step forward in quantum computing presents many challenges, not the least of which is error correction. That’s why a key part of the researchers’ goal was to see if their quantum processor could be used for error correction, and it seems the answer is yes.

What’s the Deal With Error Corrections in Quantum Computing?

One of the biggest issues in quantum research is finding and fixing errors. Traditional error correction involves spreading information across many physical qubits; this way, if one fails, you have others that help prevent corruption of the underlying logical information.
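The idea of spreading one logical value across several physical carriers can be sketched with a classical analogy: the three-bit repetition code with majority voting. This is only an illustration under simplifying assumptions. Real quantum codes can't copy or directly read out qubits the way this code copies bits, and the function names and the 5% flip rate below are made up for the example.

```python
import random

def encode(bit, copies=3):
    """Spread one logical bit across several physical bits."""
    return [bit] * copies

def add_noise(bits, flip_prob, rng):
    """Flip each physical bit independently with probability flip_prob."""
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]

def decode(bits):
    """Recover the logical bit by majority vote."""
    return 1 if sum(bits) > len(bits) / 2 else 0

def logical_error_rate(flip_prob, copies=3, trials=20_000, seed=1):
    """Estimate how often the decoded bit is wrong (hypothetical noise model)."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        noisy = add_noise(encode(0, copies), flip_prob, rng)
        failures += decode(noisy) != 0
    return failures / trials

if __name__ == "__main__":
    print(logical_error_rate(0.05))
```

With a 5% flip rate per physical bit, the vote only fails when two or more of the three bits flip, which in theory happens about 0.7% of the time (3p² − 2p³). That's the basic trade: more physical carriers, fewer logical errors.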

In this case, the researchers took a new approach to error correction, creating a circuit of 48 error-corrected logical qubits. This was done using arrays with up to 280 “noisy” (i.e., error prone) physical Rydberg atomic qubits. (More on Rydberg atomic qubits in just a few moments.)

While that may not sound like a lot, let’s take a moment to understand the significance of this discovery. Historically, researchers globally have only been able to realize 1-2 logical qubits at a time. That’s because modern error-correcting methods are estimated to take upwards of 1,000 physical qubits to form a single logical qubit. By contrast, the new research achieved 48 logical qubits using up to 280 physical qubits. That’s quite a difference.
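To put those figures side by side, here’s a quick back-of-the-envelope calculation. It uses only the numbers quoted above, and the variable names are ours. Keep in mind this is plain arithmetic, not a claim that both approaches deliver the same level of error protection.

```python
PHYSICAL_QUBITS = 280          # upper bound quoted above
LOGICAL_QUBITS = 48            # logical qubits in the reported circuit
ESTIMATED_PER_LOGICAL = 1_000  # estimate quoted above for a single logical qubit

# Physical qubits per logical qubit in the reported experiment
observed_ratio = PHYSICAL_QUBITS / LOGICAL_QUBITS

# Physical qubits that 48 logical qubits would take at the 1,000:1 estimate
physical_needed_at_estimate = LOGICAL_QUBITS * ESTIMATED_PER_LOGICAL

print(f"{observed_ratio:.1f} physical qubits per logical qubit")
print(f"{physical_needed_at_estimate:,} physical qubits at the 1,000:1 estimate")
```

That works out to fewer than six physical qubits per logical qubit, versus 48,000 physical qubits if each logical qubit really needed 1,000.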

According to the free published preview draft of the research journal article (which we linked to in the intro):

“In addition to error-detecting benefits, it appears the logical circuit is significantly more tolerant to coherent errors, exhibiting operation that is inherently digital, just with imperfect fidelity […] consistent with theoretical predictions.”

So, in addition to achieving greater error detection capabilities, the researchers say the logical qubit circuit handles coherent errors more effectively, aligning with their expectations.

For a more in-depth look at the research and data, be sure to read the Nature article.

A Quick Review of How Quantum Computers Use Qubits to Operate

Qubits are the most fundamental units in quantum computing. Relying on quantum mechanics, they’re used to convey information in these advanced systems. To put it simply, a classical (modern) bit is either 0 or 1; qubits, on the other hand, can exist in a superposition and represent “numerous possible combinations of 0 and 1” simultaneously.
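If you’d like to see what “superposition” means in numbers, here’s a minimal sketch. It assumes the textbook model in which a single qubit is described by two amplitudes whose squared magnitudes give the odds of reading 0 or 1. The names `probabilities`, `measure`, `ZERO`, and `PLUS` are ours, and this is a toy model rather than how real quantum hardware is programmed.

```python
import math
import random

def probabilities(state):
    """Return (P(read 0), P(read 1)) for a state given as two amplitudes."""
    a0, a1 = state
    return abs(a0) ** 2, abs(a1) ** 2

def measure(state, rng):
    """Simulate one readout: the result is always a plain 0 or 1."""
    p0, _ = probabilities(state)
    return 0 if rng.random() < p0 else 1

ZERO = (1.0, 0.0)                            # behaves like a classical 0
PLUS = (1 / math.sqrt(2), 1 / math.sqrt(2))  # equal superposition of 0 and 1

if __name__ == "__main__":
    rng = random.Random(42)
    print(probabilities(PLUS))
    print([measure(PLUS, rng) for _ in range(10)])
```

The `ZERO` state always reads as 0, while `PLUS` reads as 0 or 1 with roughly equal odds. Either way, a single readout only ever returns one classical bit.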

Qubits fall into two broad categories:

- Physical qubits. These generally refer to the quantum hardware that comprises these delicate machines. They’re error prone, generate a lot of heat, and are difficult to manage; this is in part why they’re typically found in controlled, refrigerated environments at a near-absolute zero temperature (-459 degrees Fahrenheit) to help minimize errors. (Clearly, we’re not talking about your momma’s garage chest freezer here.)

- Logical qubits. These, on the other hand, are higher-level, error-corrected abstractions that are created using arrays of physical qubits. Because they’re able to better maintain their quantum state, they form the foundation of fault-tolerant quantum algorithms, which are integral to complex problem-solving in quantum computing.

Some quick examples of physical qubit types include photonic qubits, superconducting qubits, Rydberg atomic qubits, and trapped ion qubits. According to Microsoft Azure Quantum, superconducting is the most commonly used type of qubit you’ll find in quantum computing systems.

So, Why’d the Researchers Use Rydberg Atoms?

Rydberg qubits differ from traditional qubits, whose operations are arranged sequentially and which tend to lack uniformity in their individual characteristics.

According to DARPA’s announcement of the research publication, the Rydberg atomic qubits aren’t like others in that they’re homogeneous (i.e., they’re similar or uniform in sharing common characteristics) and behave the same way on a quantum chip. This predictability is thought to make these qubits easier to scale, manipulate, control, and move to perform operations.

Why Taking a New Approach to Error Correction Matters

One of the major challenges facing quantum computing is locating and correcting errors. Qubits must be arranged in a way that enables them to maintain their superposition and state. When they don’t, this results in one or more errors.

Being able to locate and correct these errors quickly is thought to be the path forward for quantum computing scalability. Traditionally, this process hasn’t been easy using physical qubits, and it requires many resources. According to an article from Princeton on separate research relating to locating errors (via biases):

“The central obstacle to the future development of quantum computers is being able to correct for these errors. However, to correct an error, you first have to figure out if an error occurred, and where it is in the data. And typically, the process of checking for errors introduces more errors, which have to be found again, and so on.”

Basically, the more qubits involved, the more errors you can generally expect.
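A rough way to see why scale hurts: if each qubit has some small, independent chance of an error during a computation, the chance that at least one qubit goes wrong is 1 − (1 − p)^n. The sketch below assumes independent errors and a made-up 0.1% per-qubit error rate, so treat it as an illustration and not a model of any real device.

```python
def prob_any_error(per_qubit_error, n_qubits):
    """Chance that at least one of n independent qubits suffers an error."""
    return 1 - (1 - per_qubit_error) ** n_qubits

if __name__ == "__main__":
    # Hypothetical 0.1% error chance per qubit
    for n in (10, 100, 1_000):
        print(n, round(prob_any_error(0.001, n), 3))
```

Under these assumptions, the odds of at least one error climb from about 1% with 10 qubits to roughly 63% with 1,000 qubits.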

Is Quantum Computing Just Around the Corner? Not Quite

So, if researchers have now realized circuits with dozens of logical qubits, does this mean that the ability to scale up these systems is just around the corner? Are we on the cusp of quantum computers popping up any day now? Not necessarily. However, the DARPA project’s researchers certainly believe that we’re a lot closer now than we were at the start of 2023.

According to a release from DARPA:

“While it’s anticipated that at least an order of magnitude greater than 48 logical qubits will be needed to solve any big problems envisioned for quantum computers, the Rydberg logical qubit breakthrough casts new light on the traditional view that millions of physical qubits are needed before a fault-tolerant quantum computer can be developed. Given the prospect of dynamically reconfigurable quantum circuits, it’s too early to say how many logical qubits are needed to solve a particular problem; but it potentially could be far fewer than originally thought.”

But even though scalable quantum capabilities are still likely years away, that doesn’t mean industry leaders aren’t already working on ways to combat the threats they pose. And for good reason. If quantum computers become widespread and we don’t have the cryptographic foundation to replace the modern-day public key encryption we rely on to secure data, then public and private sector organizations (and the private individuals they serve) will be in for a world of pain.

In November, we shared key takeaways from the PKI Consortium’s second Post-Quantum Cryptography conference. Here, experts from around the world gathered in Amsterdam to discuss ongoing projects and research, including updates relating to NIST’s first set of three post-quantum cryptography (PQC) algorithms, which are set to be released in 2024. These cryptographic standards aim to protect data against classical computer threats as well as provide resistance against quantum computing-based threats of the future.

Why You Should Start Preparing Now for Quantum-Resistant Cryptography

The breakthrough described in this research is a big deal because it brings us potentially a few steps closer to creating quantum computing systems that can solve complex issues and handle complex tasks without running into the traditional errors and mistakes we’re accustomed to seeing.

So, are quantum computers going to become widespread in 3 years? 13? 30? No one can say with any certainty. The point for businesses and organizations to be aware of is that they should be taking the time now to prepare for what’s to come when (not if) it does eventually happen.

- Switch to using hybrid cryptographic algorithms. The goal here is to protect your data against modern threats and help protect your data against future quantum threats. Using classical cryptographic algorithms alone won’t help you do that because they’ll secure your data against classical attacks but will do jack when it comes to advanced quantum-based threats.

- Assess your existing IT infrastructure. Take the time now to determine what systems and hardware you have in place and identify what you’ll need to upgrade or replace in the future to support quantum-resistant cryptography.

- Automate management of your cryptographic assets. Knowing which cryptographic assets you have and where they’re deployed is crucial to data security. If you don’t know what digital certificates and cryptographic keys you have, who’s in charge of them, and where to find them, you’re in for a world of hurt.
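To make the “hybrid” idea from the first tip a bit more concrete, here’s a minimal sketch of one common pattern: derive a single session key from two shared secrets, one from a classical key exchange and one from a post-quantum key encapsulation mechanism, so an attacker would need to break both. Everything specific here is an assumption for illustration. The two secrets are random placeholders (no real key exchange takes place), the names `hybrid_session_key` and `hybrid-example` are hypothetical, and the HKDF helper follows RFC 5869 using only Python’s standard library. It is not production code.

```python
import hashlib
import hmac
import os

def hkdf_sha256(key_material, salt, info, length=32):
    """HKDF (RFC 5869) with SHA-256: extract a key, then expand it."""
    prk = hmac.new(salt, key_material, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]

def hybrid_session_key(classical_secret, pqc_secret):
    """Combine both shared secrets; the output depends on each of them."""
    combined = classical_secret + pqc_secret
    return hkdf_sha256(combined, salt=b"\x00" * 32, info=b"hybrid-example")

if __name__ == "__main__":
    # Placeholders standing in for the outputs of two real key exchanges
    classical_secret = os.urandom(32)
    pqc_secret = os.urandom(32)
    print(hybrid_session_key(classical_secret, pqc_secret).hex())
```

In practice, you’d rely on a vetted library and protocol support for hybrid key exchange instead of rolling your own, but the sketch shows why the approach hedges your bets: the derived key changes if either input secret changes.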
