SSL Offloading: What is it? How does it work? What are the benefits?

What is SSL Offloading? Performing SSL at the Load Balancer level.

Today we’re going to cover a question that comes up from time to time, and may seem especially foreign to people without an IT background: What is SSL offloading? We’ll give a quick overview of what SSL offloading means, why you may want to do it and whether you should.

One of the misconceptions about SSL/TLS, and really about the way the internet works in general, is that it’s a 1:1 connection: a person’s computer connects directly with a web server and communication goes directly from one to the other. In reality, it’s far more complicated than that, with sometimes upwards of a dozen stops between endpoints.

That’s an important piece of information to keep in mind as we start getting into SSL offloading.

So, what is SSL offloading and how does it work?

Let’s hash it out…

What is SSL offloading?

Before TLS 1.3, even before TLS 1.2, frankly, SSL/TLS used to legitimately add latency to connections. That’s what lent itself to the perception that SSL/TLS slowed down websites. Ten years ago, that was the knock on SSL certificates. “Oh they slow down your site.” And that was true at the time.

It’s not today, but in the past SSL/TLS was considered a bit resource hungry. For starters, you have the SSL/TLS handshake. It’s been refined to where it’s now a single round trip in TLS 1.3, but in TLS 1.2 and earlier a full handshake took two round trips (on top of the TCP handshake). Then, following the handshake, additional processing power has to be spent encrypting and decrypting the data being transmitted. As that additional SSL/TLS load on the server grows, the server has less capacity left for its actual job.
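To put rough numbers on the handshake cost, here is a minimal sketch. It assumes one round trip for the TCP handshake, two round trips for a full TLS 1.2 handshake and one for TLS 1.3, and it ignores session resumption and the CPU time spent on the cryptography itself. The function name and the 50 ms figure are made up for illustration.

```python
# Assumed round-trip counts for a full (non-resumed) handshake.
TLS12_ROUND_TRIPS = 2
TLS13_ROUND_TRIPS = 1


def handshake_delay_ms(rtt_ms: float, tls_round_trips: int, tcp_round_trips: int = 1) -> float:
    """Time spent waiting on the network before the first HTTP request can be sent."""
    return rtt_ms * (tcp_round_trips + tls_round_trips)


# With a hypothetical 50 ms round-trip time between client and server:
tls12_delay = handshake_delay_ms(50, TLS12_ROUND_TRIPS)  # 150 ms
tls13_delay = handshake_delay_ms(50, TLS13_ROUND_TRIPS)  # 100 ms
```

The gap grows with distance: the longer the round trip, the more each extra handshake step costs.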

Again, a lot of this has been cleaned up in TLS 1.3, and HTTP/2 (which browsers only support over SSL/TLS) helps to increase performance even more. But even with all of those improvements, SSL/TLS can still add latency with higher volumes of traffic.

So, what is SSL offloading? Well, to help offset the extra burden SSL/TLS adds, you can move that work off the application server and onto a separate device, often one built around Application-Specific Integrated Circuit (ASIC) processors that are limited to just performing the functions required for SSL/TLS, namely the handshake and the encryption/decryption. This frees up processing power for the intended application or website. That’s SSL offloading in a nutshell.

You’ll often hear it mentioned in the same breath as load balancing, because the device doing the offloading is usually a load balancer. A load balancer is any device that helps improve the distribution of workloads across multiple resources, for instance distributing the SSL/TLS workload to ASIC processors.
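To make the load balancer idea concrete, here is a minimal sketch of the simplest distribution strategy, round robin. The class name and the backend addresses are hypothetical, and real load balancers typically also weigh server health and current load.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backends in a fixed rotation, one per incoming connection."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one backend is required")
        self._rotation = cycle(list(backends))

    def pick(self):
        """Return the backend that should receive the next connection."""
        return next(self._rotation)


# Hypothetical pool of application servers sitting behind the load balancer.
balancer = RoundRobinBalancer(["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"])
first_four = [balancer.pick() for _ in range(4)]
```

After three connections the rotation wraps around, so the fourth connection lands on the first server again.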

What are the advantages of SSL offloading?

SSL offloading has several benefits:

- It offloads additional tasks from your application servers so they can focus on their primary functions.

- It saves resources on those application servers.

- And, depending on what load balancer you’re using, it can also help with HTTPS inspection, reverse-proxying, cookie persistence, traffic regulation, etc.

That last one is one of the most important: in some cases SSL offloading can assist with traffic inspection. As important as encryption is, it has one major drawback: attackers can hide in your encrypted traffic. There’s no shortage of high-profile exploits that have occurred as a result of attackers hiding in HTTPS traffic. Recently, Magecart has been using HTTPS traffic to obfuscate the payment card data it’s been exfiltrating from various payment pages.

Being able to inspect HTTPS traffic becomes almost compulsory once your organization reaches a certain size, and one of the best ways to do that is to offload your SSL/TLS processes.
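As a rough illustration of what inspection can look like once the traffic has been decrypted, the sketch below scans a plaintext payload for digit runs that pass the Luhn checksum used by payment card numbers. The function names are hypothetical and this is a toy check, not how any particular inspection product works.

```python
import re

# Runs of 13 to 19 digits are the usual length range for card numbers.
CANDIDATE = re.compile(rb"\b\d{13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for position, char in enumerate(reversed(digits)):
        value = int(char)
        if position % 2 == 1:  # double every second digit from the right
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def find_card_like_numbers(plaintext: bytes) -> list[str]:
    """Return digit runs in a decrypted payload that look like card numbers."""
    hits = []
    for match in CANDIDATE.finditer(plaintext):
        digits = match.group().decode("ascii")
        if luhn_valid(digits):
            hits.append(digits)
    return hits


# 4111111111111111 is a well-known test card number, not a real account.
suspicious = find_card_like_numbers(b"card=4111111111111111&cvv=123")
```

None of this is possible while the payload is still encrypted, which is why inspection is usually done at the point where SSL/TLS is offloaded.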

How does SSL offloading work?

When we talk about SSL offloading there are two different ways to accomplish it:

- SSL Termination

- SSL Bridging

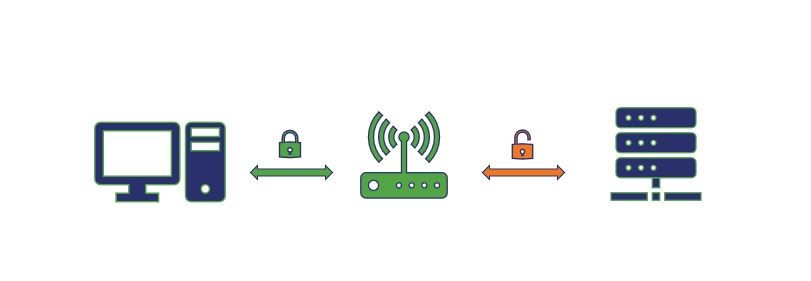

Let’s start with SSL termination because it’s a little bit simpler. Essentially it works this way: the proxy server or load balancer you use for the SSL offloading acts as the SSL terminator, which also serves as an edge device. When a client attempts to connect to a website, the client connects to the SSL terminator, and that connection is HTTPS. But the connection between the SSL terminator and the application server is via HTTP.

Now, you may be asking how that doesn’t cause problems with the browser. It’s because the HTTP connection takes place behind the scenes, on the internal network, protected by firewalls. The client still has a secure connection with the SSL terminator, which acts as the go-between.

Here’s a visualization of SSL Termination:

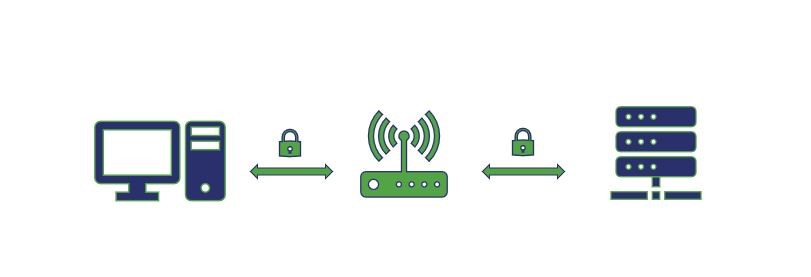

SSL Bridging is extremely similar conceptually, except rather than sending the traffic and requests on via HTTP, it re-encrypts everything before sending it to the application server.

Here’s a visualization of SSL Bridging:

Both allow you to perform traffic inspection and can help tremendously when you’re dealing with high volumes of traffic on larger networks.
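The two modes can be sketched as a small proxy built on Python’s standard `asyncio` and `ssl` modules. This is a bare-bones illustration under stated assumptions, not a production load balancer: the backend address, the listening port, and the names `pipe`, `make_handler` and `serve` are all hypothetical, and error handling is left out. The only difference between termination and bridging is whether the backend connection gets its own TLS context.

```python
import asyncio
import ssl
from typing import Optional

# Hypothetical backend address, chosen only for illustration.
BACKEND_HOST = "127.0.0.1"
BACKEND_PORT = 8080


async def pipe(reader, writer):
    """Copy bytes from reader to writer until EOF, then close the writer."""
    try:
        while True:
            chunk = await reader.read(65536)
            if not chunk:
                break
            # `chunk` is plaintext here, so this is where inspection could happen.
            writer.write(chunk)
            await writer.drain()
    finally:
        writer.close()


def make_handler(backend_ssl: Optional[ssl.SSLContext] = None):
    """backend_ssl=None gives termination (plain HTTP to the backend);
    passing an SSLContext gives bridging (traffic is re-encrypted)."""

    async def handle_client(client_reader, client_writer):
        backend_reader, backend_writer = await asyncio.open_connection(
            BACKEND_HOST, BACKEND_PORT, ssl=backend_ssl
        )
        # Relay both directions at once until either side closes.
        await asyncio.gather(
            pipe(client_reader, backend_writer),
            pipe(backend_reader, client_writer),
        )

    return handle_client


async def serve(certfile: str, keyfile: str, bridging: bool = False):
    # TLS context for the client-facing (HTTPS) side of the proxy.
    front = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    front.load_cert_chain(certfile, keyfile)
    # A second context is only needed when re-encrypting to the backend.
    back = ssl.create_default_context() if bridging else None
    server = await asyncio.start_server(make_handler(back), "0.0.0.0", 8443, ssl=front)
    async with server:
        await server.serve_forever()
```

Running `asyncio.run(serve("server.crt", "server.key"))` would start it in termination mode, assuming those certificate files exist; passing `bridging=True` switches to bridging, in which case the backend has to present a certificate the proxy trusts.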

Bear in mind, encryption is an incredibly CPU-intensive task. When the industry migrated from 1024-bit RSA keys to 2048-bit ones, the CPU usage involved increased somewhere between four and seven times, depending on the server. We’ll likely never even get to 4096-bit keys because, frankly, at that point the increase in CPU usage isn’t commensurate with the improvement in security. That’s why we’re seeing a push towards more elliptic curve-based cryptosystems.
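You can get a feel for why key size matters with a quick experiment. The sketch below times plain modular exponentiation, the core operation behind an RSA private-key step, at two operand sizes. It is not real RSA: the numbers are random rather than built from primes, and real libraries use optimizations that this ignores, so treat the ratio as a rough indication only. The function names are made up for illustration.

```python
import random
import time


def time_modexp(bits: int, reps: int = 20) -> float:
    """Average seconds for one modular exponentiation with `bits`-bit operands."""
    rng = random.Random(0)  # fixed seed so every run uses the same numbers
    top_bit = 1 << (bits - 1)
    modulus = rng.getrandbits(bits) | top_bit | 1  # full length and odd
    exponent = rng.getrandbits(bits) | top_bit     # full length, like a private exponent
    base = rng.getrandbits(bits - 1)
    start = time.perf_counter()
    for _ in range(reps):
        pow(base, exponent, modulus)
    return (time.perf_counter() - start) / reps


def slowdown(small_bits: int = 1024, large_bits: int = 2048) -> float:
    """How many times slower the larger operand size is than the smaller one."""
    return time_modexp(large_bits) / time_modexp(small_bits)
```

With textbook multiplication, the work grows roughly with the cube of the key length, so doubling the key size points to a slowdown of up to about 8x. The exact figure from `slowdown()` will vary by machine and Python version.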

So let’s cover one last item: should you consider SSL offloading?

And frankly, that all comes down to you, your website and what you’re trying to do. A large media site like an ESPN or a CNN would be well-suited to use a load balancer owing to the volume of traffic they both handle. On the other hand, if you’re just running a website for a local bakery, you’d probably be fine just letting your server handle everything, especially with the improvements made by TLS 1.3.

That’s all for today. As always, leave any comments or questions below.
